Executive Summary

| The era of keyword volume SEO is over. AI-led search engines now gatekeep information and they cite sources, not pages. Brands that survive will be those that transform their websites from content hubs into verified knowledge layers that AI models are mathematically compelled to reference. |

The core argument in five points:

- Over 57% of online content is now generated or translated by AI, creating a “Sea of Sameness” that search algorithms actively penalise.

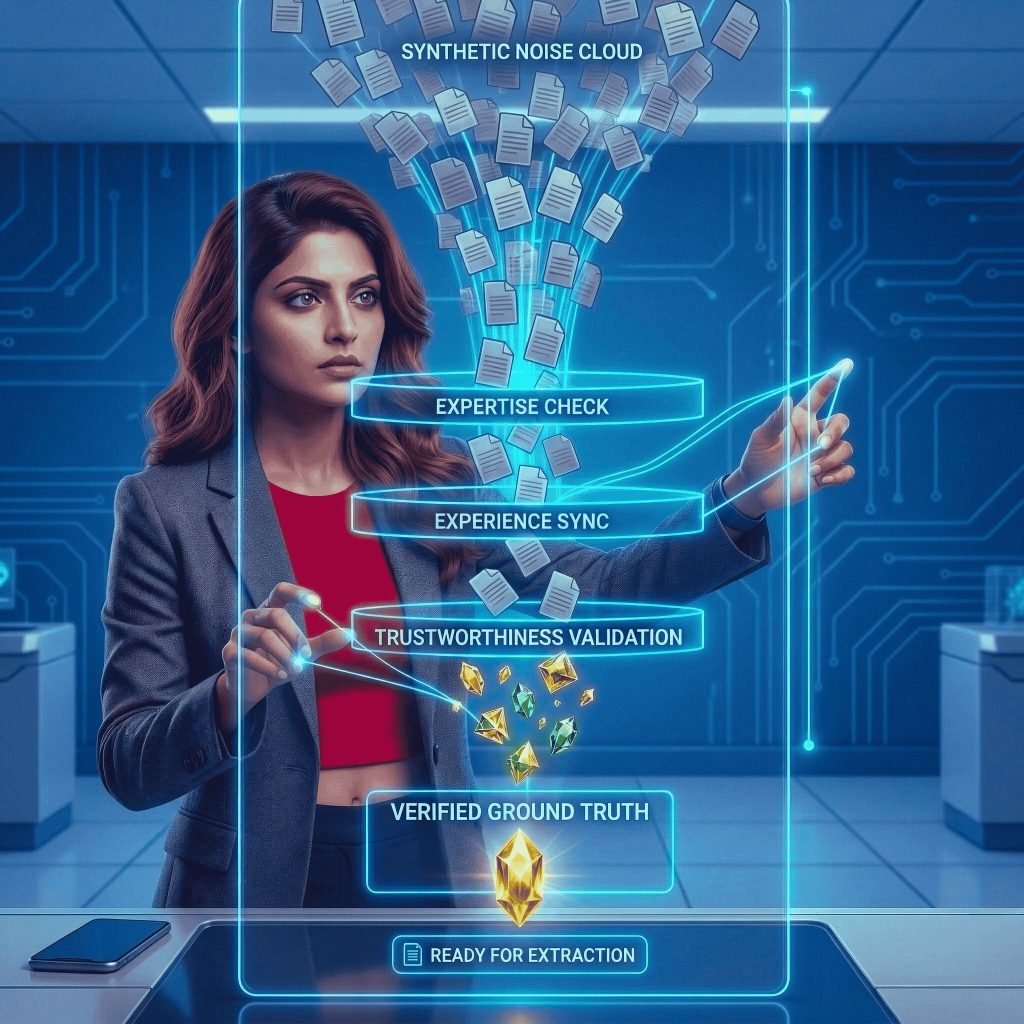

- LLMs trained on synthetic data degrade in quality over time, a phenomenon called Model Collapse. This forces search engines to hunt for verifiable, human-backed “Ground Truth.”

- Google’s patented “Information Gain Score” rewards content that introduces net-new knowledge. If an AI could have written your article, your score is effectively zero.

- The Verified Source Protocol is a three-pillar framework (Proprietary Data, Technical Verifiability, and Human Authority) that structurally positions your brand as a citable AI source.

- Brands that have adopted this framework report a 400%+ increase in AI citations and a 22% uplift in high-converting branded search within six months.

The Search Paradigm Has Fundamentally Shifted

A quiet crisis is playing out inside marketing teams across the digital commerce landscape. Organic traffic is declining, yet leaders cannot identify where those users went. The answer is straightforward: they stopped searching for links. They started asking machines for answers.

Large Language Models (LLMs), including OpenAI’s SearchGPT, Perplexity, and Google’s AI Overviews, have become the primary gatekeepers of information. This structural change has made the traditional playbook of high-volume, keyword-optimised content not merely ineffective, but actively harmful to brand credibility.

“The era of searching for links is dead. We have entered the era of asking machines for the truth.”

More than 57% of all online content is now generated or translated by artificial intelligence, producing a synthetic “Sea of Sameness.” Search algorithms recognise this, and they are aggressively filtering it out. To remain visible in an environment dominated by Answer Engine Optimization (AEO) and Generative Engine Optimization (GEO), eCommerce and B2B leaders must make a fundamental strategic shift: from Content Creation to Knowledge Architecture.

Why Model Collapse Matters for Your Brand

To understand the urgency behind the Verified Source Protocol, it is necessary to understand how AI models break down.

LLMs require vast quantities of human-generated training data. However, as the open web fills with AI-generated content, these models increasingly ingest their own outputs, creating a feedback loop that data scientists call Model Collapse. Deprived of fresh, human-verified data, AI outputs become progressively repetitive, inaccurate, and unreliable.

Search engines are responding defensively. Their ranking algorithms are being rewritten specifically to identify and surface “Ground Truth”: original, verified, human-backed data that has not been synthetically hallucinated.

| Critical implication for brands: If your eCommerce site or corporate blog is recycling the same macro trends already published everywhere else, AI engines will treat you as redundant. You offer no grounding value. In the GEO era, if you are not a primary source, you are invisible. |

The Information Gain Mandate

The technical foundation of GEO is built on a concept called Information Gain. The term originated in machine learning, and Google has since patented an “Information Gain Score” as an explicit component of its search algorithm. The score measures how much net-new knowledge a piece of content contributes relative to everything else already indexed on that topic.

A Practical Illustration

Consider a user asking an AI search engine: “What are the hidden API latency costs when migrating a monolithic eCommerce site to a composable architecture?”

- If your article covers the same high-level pros and cons as every other ranking page, your Information Gain score is zero. The AI has nothing new to cite.

- If your article includes a proprietary breakdown of API call latency across specific front-end frameworks, an interview with your lead system architect, and a custom five-year total-cost-of-ownership dataset, your Information Gain score is significant. The AI recognises your page as introducing net-new knowledge to the internet.
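The contrast between the two articles can be made concrete with a toy proxy. The sketch below is not Google’s patented scoring method, whose internals are not described here. It only measures the share of a draft’s word trigrams that appear in none of the existing articles on the topic. The function names and the choice of trigrams are assumptions made for illustration.

```python
import re


def shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Return the set of lowercase word n-grams found in text."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def novelty_share(candidate: str, existing: list[str], n: int = 3) -> float:
    """Share of the candidate's n-grams that appear in none of the existing texts.

    A toy proxy for net-new content, not a real ranking signal.
    """
    candidate_grams = shingles(candidate, n)
    if not candidate_grams:
        return 0.0
    seen: set[tuple[str, ...]] = set()
    for text in existing:
        seen |= shingles(text, n)
    return len(candidate_grams - seen) / len(candidate_grams)
```

A draft that restates the ranking pages nearly word for word scores close to 0, while a draft built around proprietary figures scores closer to 1. Paraphrased repetition would still look novel to this proxy, so treat it as a rough pre-publication check rather than a measure of real information gain.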

Original research earns up to 74% more backlinks and citations than standard content.

In GEO, Information Gain is the primary currency of visibility. Acquiring it requires structuring digital assets to demonstrably prove Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) to a machine, not just a human reader.

What Is the Verified Source Protocol?

The Verified Source Protocol is a strategic framework for repositioning a website as a Grounding Data Layer: a structured repository of high-density, fact-rich assets that AI models are mathematically compelled to cite when constructing accurate answers.

The shift in orientation is precise: instead of writing for a human reader scanning for keywords, the framework structures data for an AI agent hunting for verifiable facts. This is not a stylistic change. It is an architectural one.

The protocol rests on three non-negotiable pillars.

Pillar 1: Proprietary First-Party Data

Signals: Experience & Expertise

The governing principle of Pillar 1 is simple: if an LLM can write your article based on its existing training data, you should not publish that article. AI models prioritise “anchor facts”: unique, verifiable data points that validate their synthesised answers. Without them, your content provides no citation value.

Actionable Shifts

- Retire the how-to blog. Replace generic how-to guides with original research. If you sell industrial building materials, do not publish “How to Choose Drywall.” Publish “2026 Tensile Strength Stress Tests on Recycled Gypsum Boards.” The specificity is the value.

- Harvest internal data. Your company holds a goldmine of proprietary data. Anonymise customer purchasing trends, supply chain efficiency metrics, or customer service resolution times and publish them as structured industry reports.

- Commission primary research. Solicit direct responses from customers, suppliers, or industry practitioners. Survey data attributed to your brand becomes a primary, citable source.
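As a minimal sketch of the “harvest internal data” step, the example below aggregates customer service resolution times into per-category summaries. The record keys (`customer_id`, `category`, `hours_to_resolve`) and the minimum group size of 5 are hypothetical. Dropping identifiers and suppressing small groups is a basic safeguard only, not a complete anonymisation process.

```python
from collections import defaultdict
from statistics import median


def summarise_resolution_times(tickets, min_group_size=5):
    """Aggregate per-ticket resolution times into per-category summaries.

    tickets: iterable of dicts with the hypothetical keys "customer_id",
    "category", and "hours_to_resolve". Customer identifiers never reach
    the output, and categories with fewer than min_group_size tickets
    are suppressed.
    """
    by_category = defaultdict(list)
    for ticket in tickets:
        by_category[ticket["category"]].append(ticket["hours_to_resolve"])

    report = {}
    for category, hours in sorted(by_category.items()):
        if len(hours) < min_group_size:
            continue  # too few tickets to publish safely
        report[category] = {
            "tickets": len(hours),
            "median_hours": median(hours),
        }
    return report
```

The resulting dictionary contains only counts and medians, which is the kind of structured summary that can feed a published industry report.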

| Key stat: Original research and proprietary data earn up to 74% more backlinks and citations than standard content. In the GEO environment, those citations are the primary mechanism of AI visibility. |

Pillar 2: Technical Verifiability

Signal: Trustworthiness

Possessing unique data is a necessary condition for GEO visibility, but not a sufficient one. If an AI crawler cannot parse, interpret, and verify that data, it cannot cite it. The website’s technical architecture must speak the native language of the machine.

Actionable Shifts

- Implement advanced Schema Markup. Basic product schema is no longer adequate. Implement advanced JSON-LD structured data: Dataset schema for proprietary research, ProfilePage schema to verify author credentials, and FAQPage schema mapped to the long-tail conversational queries users direct at voice assistants and AI search tools.

- Optimise for semantic HTML and vector readiness. AI search engines use vector embeddings to map the semantic relationships between concepts. Structure pages with clear, hierarchical HTML (H1–H3) that functions as a logical knowledge map. Ensure your highest-value information gain hooks (unique statistics, data tables, and proprietary findings) appear early in the page and in machine-readable formats.

- Audit and prune redundant content. Remove content that adds no information gain. Every additional page of undifferentiated content on your domain dilutes your overall authority signal.
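The Dataset markup mentioned above might look like the following sketch, which builds a schema.org `Dataset` description and wraps it in the script element used to embed JSON-LD in a page. The property names (`name`, `description`, `creator`, `distribution`, and so on) come from the schema.org vocabulary. The helper functions and any values passed to them are hypothetical.

```python
import json


def dataset_jsonld(name, description, url, publisher_name, date_published, csv_url):
    """Build a schema.org Dataset description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Dataset",
        "name": name,
        "description": description,
        "url": url,
        "datePublished": date_published,
        "creator": {"@type": "Organization", "name": publisher_name},
        "distribution": [
            {
                "@type": "DataDownload",
                "encodingFormat": "text/csv",
                "contentUrl": csv_url,
            }
        ],
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script element used to embed it in an HTML page."""
    # Escape "</" so a string value cannot close the script element early;
    # "\/" is a valid JSON escape for "/".
    safe = jsonld.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{safe}\n</script>'
```

For example, `as_script_tag(dataset_jsonld("2026 Tensile Strength Stress Tests on Recycled Gypsum Boards", "Stress test results.", "https://example.com/research/gypsum", "Example Materials Ltd", "2026-01-15", "https://example.com/research/gypsum.csv"))` returns markup that could be placed in the page’s `<head>`. All of those values are placeholders.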

Pillar 3: Human-in-the-Loop Authority

Signal: Authoritativeness

As the web becomes progressively more synthetic, “Proof of Humanity” is emerging as a critical ranking signal. AI models are increasingly cross-referencing content against off-page human validation hubs to confirm that an author is a real, credentialled expert rather than an AI persona.

Actionable Shifts

- Establish digital signatures for authors. Every piece of content must be attributable to a verified human entity. Link author bios directly to active LinkedIn profiles, academic publications, peer-reviewed citations, or verified professional credentials.

- Activate community validation. Platforms including Reddit, Quora, and LinkedIn are receiving elevated algorithmic weighting because they represent authentic human discourse. Subject matter experts must actively participate in these forums, citing your proprietary data where relevant. When an AI model detects community consensus around your data, it elevates your Trustworthiness score accordingly.

- Reference authoritative external sources. Where substantive topics are covered in your content, cite the primary academic and industry studies that support your claims. LLMs cross-reference citations; citing legitimate sources improves your own perceived reliability.
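One way to express the author-verification step in markup is a schema.org `ProfilePage` whose main entity is a `Person` with `sameAs` links to external profiles. The sketch below assumes a hypothetical helper name and placeholder values. How much weight any given AI engine places on these links is not established here.

```python
import json


def author_profile_jsonld(name, job_title, employer, profile_urls):
    """Build a schema.org ProfilePage whose main entity is the author."""
    data = {
        "@context": "https://schema.org",
        "@type": "ProfilePage",
        "mainEntity": {
            "@type": "Person",
            "name": name,
            "jobTitle": job_title,
            "worksFor": {"@type": "Organization", "name": employer},
            # sameAs lists external pages that identify the same person,
            # such as a LinkedIn profile or a publications page.
            "sameAs": list(profile_urls),
        },
    }
    return json.dumps(data, indent=2)
```

The output is embedded on the author’s bio page in the same way as any other JSON-LD block, so that each byline resolves to one consistent, machine-readable identity.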

Protocol in Action: A B2B Industrial Case Study

Consider a mid-market industrial manufacturing and B2B eCommerce brand whose marketing agency had, for years, produced generic SEO content: “Top 5 Uses for Industrial Abrasives,” “How to Maintain Your Manufacturing Equipment.” Traffic was declining rapidly as AI-generated overviews displaced these articles from search results.

The brand adopted the Verified Source Protocol and made three structural changes:

- They replaced generic blog posts with in-depth technical whitepapers authored by senior floor engineers, covering subjects such as “Thermal Friction Reduction Algorithms in High Speed Machining.”

- They wrapped this data in advanced Dataset and Article schema markup, ensuring AI crawlers could parse and attribute the findings.

- They linked every author to verified LinkedIn profiles and documented engineering credentials, establishing human authority signals.

Outcomes at Six Months

- 400%+ increase in citations across Perplexity, Claude, and Google AI Overviews

- 22% surge in high-converting, branded search volume

Overall vanity traffic declined by 15% as low-intent clicks dropped away. But the citations that mattered, those from procurement managers asking AI tools highly specific, high-intent questions about thermal friction, pointed directly to the manufacturer’s whitepapers as the definitive source.

| The brand stopped competing for clicks and started monopolising the AI’s “Ground Truth.” That distinction is the difference between traffic and revenue. |

Using AI to Build the Protocol

The Verified Source Protocol does not advocate abandoning AI in content workflows. The objective is to deploy AI strategically to accelerate the construction of your knowledge architecture. Three high-leverage applications:

- Mining unstructured internal data. Use secure, private LLMs to process sales call recordings, customer support transcripts, and technical manuals. These tools can extract the most specific and recurring questions your buyers ask, converting raw conversation into structured, authoritative FAQ content.

- Gap analysis for Information Gain. Before creating any new digital asset, feed the top 10 existing search results into an AI tool. Prompt it: “Analyse these articles and identify the core technical concepts, data points, or strategic perspectives that are absent.” The output becomes your information gain blueprint.

- Automating schema generation. Use AI to generate the JSON-LD Schema markup required to make your proprietary data interpretable to search engine crawlers. This removes a significant technical barrier and ensures consistent, accurate structured data across your entire content library.
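AI-generated markup is worth checking before it ships. The sketch below is one possible pre-publication check: it confirms the output parses as JSON, declares the schema.org context, and carries a small set of required properties per type. The `REQUIRED_PROPERTIES` table is a hypothetical house rule, not the official requirements of schema.org or of any search engine.

```python
import json

# Hypothetical house rules: properties each type must carry before publishing.
REQUIRED_PROPERTIES = {
    "Dataset": {"name", "description"},
    "FAQPage": {"mainEntity"},
    "ProfilePage": {"mainEntity"},
}


def check_jsonld(raw: str) -> list[str]:
    """Return a list of problems found in a JSON-LD string (empty if none)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top level must be a JSON object"]

    problems = []
    if data.get("@context") not in ("https://schema.org", "http://schema.org"):
        problems.append("missing or unexpected @context")
    schema_type = data.get("@type")
    if not isinstance(schema_type, str) or schema_type not in REQUIRED_PROPERTIES:
        problems.append(f"unsupported @type: {schema_type!r}")
        return problems
    for prop in sorted(REQUIRED_PROPERTIES[schema_type] - set(data)):
        problems.append(f"{schema_type} is missing {prop!r}")
    return problems
```

An empty list means the markup passed these checks only. It does not guarantee that the facts inside the markup are correct, so a human review of the values remains part of the workflow.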

Conclusion: The Intelligence-First Imperative

The rules of digital visibility have been rewritten. Winning by out-publishing competitors on volume is no longer a viable strategy; it is a liability. In the era of AI-led search, the winners will be the brands that are verified, proprietary, and authentic.

If your digital commerce strategy still centres on keyword density and content cadence, you are optimising for a search environment that ended in 2024. The game now is to make your website an authoritative, machine-readable repository of unique knowledge that AI engines have no choice but to cite.

The Verified Source Protocol provides that path. It is the transition from being a participant in the Sea of Sameness to becoming the source that the algorithm depends upon.

| The defining question for any digital commerce brand in 2026: Is your website a piece of content, or is it a verified source of truth? |

Further Reading: The State of SEO in 2026: AI Search, Agentic Commerce & Enterprise Visibility

About PracticeNext

PracticeNext specialises in AI-Native Digital Growth Systems, helping eCommerce and B2B leaders transition from legacy SEO to advanced AEO and GEO architectures. We help build verified authority for the intelligence-first era.