A Technical and Strategic Analysis for Commerce Leaders, CTOs, and Platform Architects

Executive Summary

The history of eCommerce technology is a history of friction elimination: from monolithic on-premises servers in the 1990s, through microservices and headless architectures, to the composable platforms of the early 2020s. Every cycle reduced latency, improved personalisation, and extended reach. Each was, fundamentally, evolutionary.

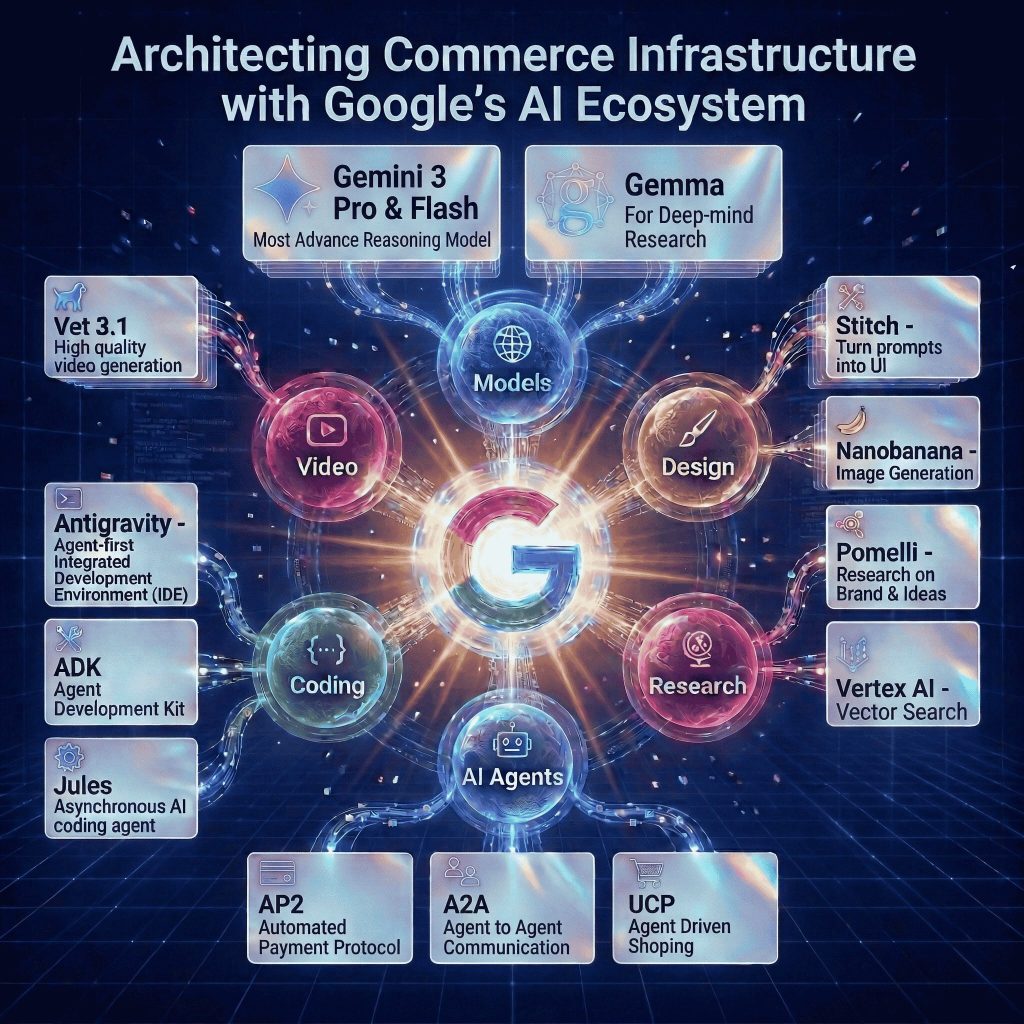

Google’s Full Stack AI Ecosystem represents a genuine architectural discontinuity: a shift from systems that serve human decisions to systems that make them. We are moving from Headless Commerce, where frontends decouple from backends for flexibility and speed, to Agentic Commerce, where software can perceive context, reason across data sources, plan multi-step workflows, and execute transactions autonomously, without waiting for a human to act.

This is not a roadmap aspiration. It is happening now, at scale, across some of the largest commercial platforms in the world, with measurable, compounding commercial outcomes that leave no room for ambiguity about the direction of travel.

| Metric | Result | Source |
| --- | --- | --- |
| CTR uplift | +21.4% | Gemini Flash ad copy vs. static variants |
| ROAS improvement | +37% | Myntra • Full-funnel Google AI ad solutions |
| Operating cost reduction | −40% | Carrefour • Post-cloud migration to GCP |
| Build cost reduction | −85% | Adya • Fine-tuned LLMs on Google Cloud |
| Trend forecast accuracy | 85% | Stitch Fix • Generative inventory intelligence |
| AOV increase | +40% | Stitch Fix • AI-driven personalisation |

This report provides a structured technical and strategic analysis of the Autonomous Commerce Stack, from its cognitive infrastructure base to its generative experience surface, and anchors each architectural layer in demonstrated commercial outcomes from Flipkart, Myntra, BigBasket, Walmart, Carrefour, Etsy, and Stitch Fix. It closes with a three-phase implementation roadmap and a governance framework calibrated to the specific risk profile of agentic systems.

The conclusion is plain: by 2026, the competitive moat in digital commerce will not be the quality of your storefront. It will be the quality of your protocols. The businesses that position their systems to be discoverable, negotiable, and trustworthy to autonomous agents will capture a disproportionate share of AI-mediated commerce volume. Those that do not will find their effective distribution shrinking as the channel mix shifts in ways their current architecture cannot address.

The Cognitive Infrastructure Layer

Every intelligent action in the Autonomous Commerce Stack originates in the cognitive infrastructure layer. Raw inputs (user queries, product data, transactional history, real-time signals) are transformed into structured reasoning and executable decisions. Understanding how Google’s Gemini 3 model family is differentiated by design is the essential starting point for any CTO making deployment decisions in this environment.

1.1 The Intelligence Bifurcation: Speed vs. Deep Reasoning

A defining architectural principle of the 2026 stack is the deliberate separation of AI compute into two operational modes: reflexive (fast, low-latency) and deliberative (deep, multi-step). Running the wrong model in the wrong context is one of the most common and commercially costly mistakes in enterprise AI deployment. Google’s Gemini 3 architecture resolves this with two purpose-built models.

Gemini 3 Flash is the high-throughput engine for synchronous, user-facing interactions where response latency correlates directly with conversion rate. It is purpose-built for real-time product search and ranking, dynamic ad copy generation, personalised category page headlines, instant FAQ resolution, and session-level recommendations. The documented 21.4% CTR uplift from replacing static ad copy with Flash-generated variants is a compelling benchmark, but it understates the compounding value when Flash is deployed across the full consumer-facing surface of a large platform. Its computational efficiency means inference costs remain manageable even when every user interaction, from search query to checkout prompt, is AI-mediated, making truly pervasive AI-native commerce economically sustainable at scale.

Gemini 3 Pro provides the deliberative layer. Optimised for complex, multi-step workflows requiring planning, conditional logic, tool use, and deep context management across long input windows, Pro is the brain behind autonomous agents: the model that receives a high-level instruction and decomposes it into a dependency graph of executable sub-tasks, delegating each to the appropriate specialised tool or sub-agent. It also powers coding agents like Jules (Part IV) and multi-step supply chain reasoning, where higher latency is an acceptable trade-off for the depth and reliability of the output.

| FLASH vs. PRO: ARCHITECTURAL DECISION GUIDE |

| Flash: Real-time UX • Search ranking • Ad copy • Session recommendations • FAQ resolution • Any high-volume, latency-sensitive inference |

| Pro: Agentic orchestration • Multi-step workflow planning • Autonomous code generation • Complex business logic • Long-context document analysis |

| Key principle: Architect the routing layer first. Running Pro everywhere is cost-prohibitive. Running Flash on tasks requiring deep reasoning is reputationally dangerous. |
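The routing principle in the guide above can be sketched as a small dispatch function. This is an illustration only: the tier labels, the `Task` fields, and the 2,000 ms cut-off are assumptions made for the example, not values taken from Google documentation.

```python
from dataclasses import dataclass

# Hypothetical tier labels; real model identifiers will differ.
FAST_TIER = "flash"
DEEP_TIER = "pro"

# Assumed cut-off below which a deliberative model is too slow to answer in time.
MIN_DEEP_LATENCY_MS = 2000


@dataclass
class Task:
    name: str
    latency_budget_ms: int  # how long the caller can wait for a response
    needs_planning: bool    # requires multi-step reasoning or tool use


def route(task: Task) -> str:
    """Return the model tier for a task, or raise if no tier fits."""
    if not task.needs_planning:
        return FAST_TIER
    if task.latency_budget_ms < MIN_DEEP_LATENCY_MS:
        # Refuse rather than silently send deep-reasoning work to the fast tier.
        raise ValueError(f"{task.name}: needs planning but budget is too tight")
    return DEEP_TIER


print(route(Task("search ranking", 200, False)))           # flash
print(route(Task("supplier reorder plan", 60_000, True)))  # pro
```

Raising on the conflicting case (deep reasoning requested inside a real-time budget) is a deliberate choice in this sketch: it surfaces the design problem to the caller instead of hiding it behind a cheaper model.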

Further Reading: The Intelligence Layer: Why Your Commerce Stack is Already Obsolete

1.2 Grounded Intelligence: Vertex AI Search for Retail

The most capable generative model is only as trustworthy as the data it can access. An LLM trained on the open web can hallucinate product details, invent availability, and confabulate pricing, all with confident fluency. Vertex AI Search for Retail is the architectural antidote: a managed search and retrieval service that ingests a retailer’s live catalogue and makes it semantically queryable by Gemini. When a user asks for “breathable workout tops under ₹1,500 for running and yoga,” the system retrieves verified results from the live catalogue and passes them as grounded context to the model. The model reasons; the retrieval system grounds.

This Retrieval-Augmented Generation (RAG) architecture sharply reduces hallucination on product data, keeps responses aligned with current inventory and pricing, and removes the need for continuous model retraining as catalogues evolve. In production, Vertex AI sits within a composable stack: Shopify Plus or commercetools managing transactional logic; a headless CMS like Contentstack feeding structured product data into the Vertex AI index; and Next.js or Vue.js providing performant, SEO-optimised rendering. Each layer is independently scalable and auditable, which is the practical meaning of “composable commerce.”
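The retrieve-then-ground pattern can be shown with a toy example. The sketch below does not call Vertex AI; it uses an in-memory list and tag matching as a stand-in for semantic retrieval, and the product names, SKUs, and prompt wording are invented sample data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    sku: str
    title: str
    price_inr: int
    in_stock: bool
    tags: frozenset


# Invented sample catalogue; a real deployment would query the live index.
CATALOGUE = [
    Product("T-101", "AeroDry Running Tee", 1299, True, frozenset({"breathable", "running", "top"})),
    Product("T-102", "FlexFlow Yoga Tank", 1499, True, frozenset({"breathable", "yoga", "top"})),
    Product("T-103", "Merino Trail Top", 3499, True, frozenset({"breathable", "running", "top"})),
    Product("T-104", "CoolMesh Gym Tee", 999, False, frozenset({"breathable", "running", "top"})),
]


def retrieve(required_tags, price_below_inr, catalogue=CATALOGUE):
    """Return in-stock products priced below the cap that carry every required tag."""
    required = set(required_tags)
    return [
        p for p in catalogue
        if p.in_stock and p.price_inr < price_below_inr and required <= p.tags
    ]


def build_grounded_prompt(question, products):
    """Embed only retrieved catalogue facts in the prompt sent to the model."""
    lines = [f"- {p.sku}: {p.title}, INR {p.price_inr}" for p in products]
    context = "\n".join(lines) if lines else "(no matching products)"
    return (
        "Answer using ONLY the catalogue entries below. If nothing matches, say so.\n"
        f"Catalogue:\n{context}\n"
        f"Question: {question}"
    )


hits = retrieve({"breathable", "top"}, price_below_inr=1500)
print(build_grounded_prompt("Breathable workout tops under 1,500 rupees?", hits))
```

Because price and stock are read at query time, a catalogue change alters the model's context immediately, with no retraining involved.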

1.3 Edge Intelligence: Gemma

Gemma, Google’s family of lightweight open-weight models, enables on-device inference for CTOs navigating data sovereignty regulations, connectivity constraints, or latency requirements that cloud-hosted models cannot meet. In commerce, the primary application is privacy-preserving visual search: a Gemma model running directly in the user’s browser or mobile app enables real-time product recognition from a photograph without the query image ever leaving the device.

For markets with inconsistent mobile connectivity (large parts of India, Southeast Asia, and Sub-Saharan Africa), on-device intelligence is an access requirement, not a premium feature. Gemma’s open-weight distribution also allows enterprises to fine-tune and deploy within their own infrastructure, maintaining full control over model behaviour.

The Agentic Interoperability Protocol

If the cognitive infrastructure is the brain of the Autonomous Commerce Stack, the agentic protocols are its nervous system, defining how agents communicate with each other, how businesses expose commerce capabilities to AI systems, and how financial transactions are authorised without direct human intervention at each step. Three protocols form this connective tissue: Agent2Agent (A2A), the Universal Commerce Protocol (UCP), and the Agent Payments Protocol (AP2). Together, they describe a new commercial internet built not for browsers, but for agents.

2.1 Agent2Agent (A2A): The Universal Translator

Without a shared communication standard, agents built on different frameworks (Google ADK, LangGraph, CrewAI, AutoGen) require bespoke integration work to coordinate. This is the API fragmentation problem of the 2010s, replicated at the agent layer. A2A solves it by defining a standard mechanism for agent discovery, capability negotiation, and task delegation using JSON-RPC over HTTP.

Each agent publishes an “Agent Card”: a structured JSON document declaring its capabilities, supported input/output formats, authentication requirements, and constraints. When a Shopify-native agent needs to coordinate with a LangGraph logistics agent, the A2A handshake allows both parties to discover each other’s capabilities, negotiate the appropriate interaction modality (for example, downgrading from a rich UI carousel to plain text if the receiving agent is voice-only), and establish a trusted session. A2A is, in the most precise sense, the HTTP of the agentic web: a universal translation layer that makes heterogeneous agent ecosystems commercially functional.
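The modality downgrade described above reduces to a preference match against the receiving agent's card. The card below is a simplified illustration: its field names and the carousel media type are assumptions for this sketch and should not be read as the A2A schema.

```python
import json

# Simplified, hypothetical Agent Card for a voice-only logistics agent.
LOGISTICS_CARD_JSON = """
{
  "name": "logistics-agent",
  "capabilities": ["shipment.quote", "shipment.track"],
  "accepted_input_modes": ["text/plain"],
  "auth": {"scheme": "bearer"}
}
"""


def negotiate_mode(sender_preferences, receiver_card):
    """Return the sender's most preferred mode that the receiver accepts, else None."""
    accepted = set(receiver_card["accepted_input_modes"])
    for mode in sender_preferences:  # ordered from richest to plainest
        if mode in accepted:
            return mode
    return None


card = json.loads(LOGISTICS_CARD_JSON)
# The sender would like to send a rich carousel but can fall back to plain text.
print(negotiate_mode(["application/x-ui-carousel+json", "text/plain"], card))  # text/plain
```

Returning `None` when no mode overlaps lets the calling agent decline the delegation instead of sending content the receiver cannot interpret.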

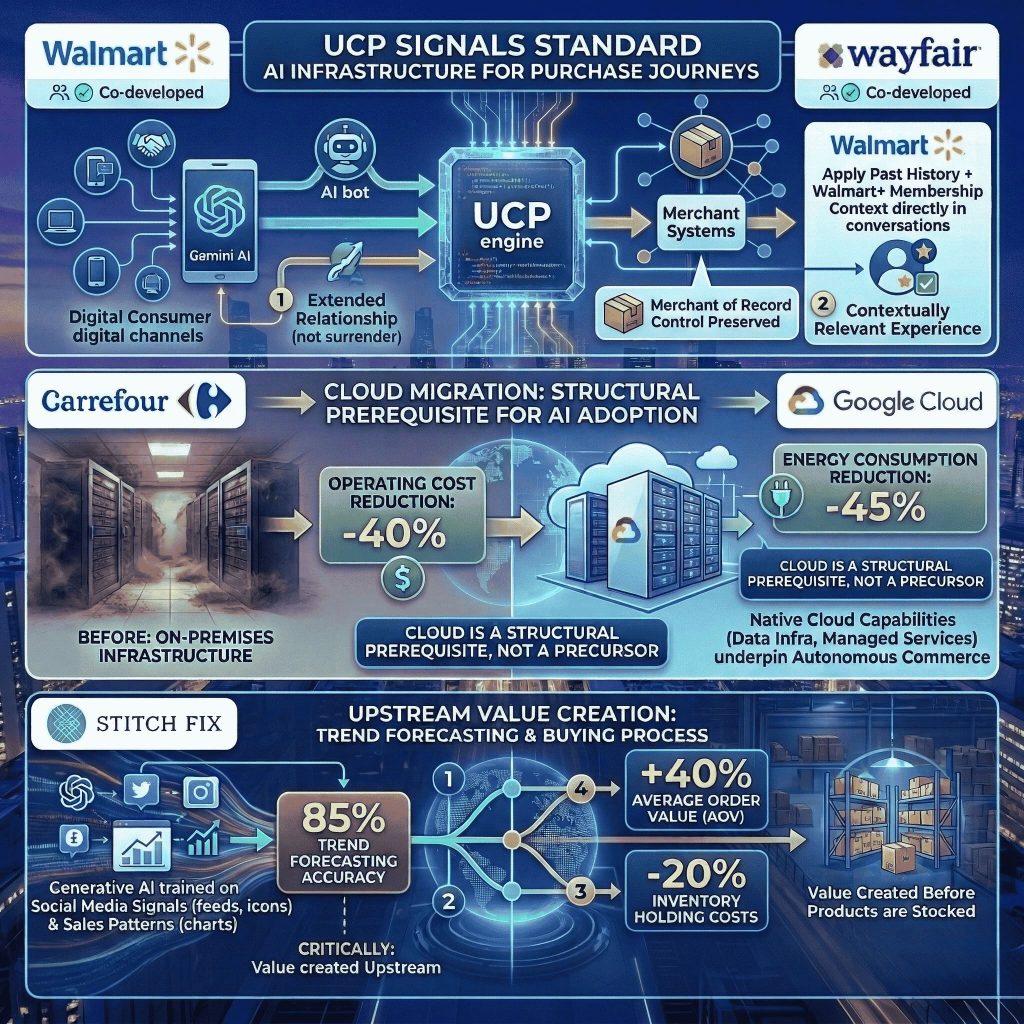

2.2 Universal Commerce Protocol (UCP): Making Stores Agent-Readable

Most online stores are built for human browsers: product data behind JavaScript-rendered pages, checkout flows requiring form interactions, business logic embedded in visual UI. An autonomous shopping agent navigating this environment faces structural invisibility. UCP transforms the store from a human-navigable interface into a machine-readable protocol layer.

Co-developed by Google, Shopify, Wayfair, and Walmart, UCP defines a standard by which merchants expose their commerce capabilities (catalogue search, inventory availability, cart management, checkout initiation) as structured, agent-readable endpoints. Critically, UCP exposes capabilities (what the store can do) rather than hard-coded API routes (how its systems happen to be structured). Any A2A-compliant agent can discover and transact with any UCP-compliant store without prior knowledge of its backend architecture. A UCP-compliant store becomes a node in a global agent commerce network, discoverable by any AI assistant searching for the capabilities it provides. A store that is not UCP-compliant is, increasingly, invisible to agent-mediated commerce. This is a distribution decision with multi-year strategic consequences.
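The difference between exposing capabilities and exposing routes can be sketched with two stores that have unrelated backends but advertise the same capability names. The manifest shape, capability names, and paths are hypothetical and are not taken from the UCP specification.

```python
# Two hypothetical stores with different backends and URL structures.
STORE_A = {
    "capabilities": {
        "catalog.search": {"method": "POST", "endpoint": "/api/v2/products/query"},
        "checkout.start": {"method": "POST", "endpoint": "/api/v2/checkout"},
    }
}
STORE_B = {
    "capabilities": {
        # Read-only store: search is offered, checkout is not.
        "catalog.search": {"method": "GET", "endpoint": "/search"},
    }
}


def resolve(manifest, capability):
    """Look up how to invoke a capability on a given store."""
    entry = manifest["capabilities"].get(capability)
    if entry is None:
        raise LookupError(f"store does not offer {capability!r}")
    return entry["method"], entry["endpoint"]


# The agent asks for the same capability everywhere and never hard-codes a path.
for store in (STORE_A, STORE_B):
    print(resolve(store, "catalog.search"))
```

A store that starts with read-only capabilities, as `STORE_B` does here, simply omits the transactional entries until it is ready to enable them.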

Further Reading: Universal Commerce Protocol: The HTTP for AI Driven Shopping

2.3 Agent Payments Protocol (AP2) and the Trust Layer

AP2 makes delegated financial agency cryptographically verifiable at commercial scale by introducing Mandates, digitally signed contracts that precisely scope an agent’s spending authority: the merchant, spending limit, transaction category, validity window, and confirmation thresholds. An agent cannot act outside its Mandate; any attempt is rejected before the payment rail is engaged.

This architecture enables a consumer to authorise an AI grocery assistant to spend up to ₹2,000 weekly on BigBasket without authorising expenditure anywhere else, or a procurement agent to execute purchase orders up to ₹50,000 with approved suppliers without sign-off on each transaction.
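The scoping logic of a Mandate can be illustrated with a short check that runs before any payment is attempted. This sketch makes several assumptions: the field names are invented, the weekly spend is tracked by the caller, and an HMAC with a shared demo key stands in for whatever signature scheme AP2 actually specifies.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone

DEMO_KEY = b"demo-key-not-for-production"  # stand-in for real key material

# Hypothetical Mandate matching the grocery example above.
MANDATE = {
    "agent": "grocery-assistant",
    "merchant": "bigbasket",
    "category": "grocery",
    "weekly_limit_inr": 2000,
    "valid_from": "2026-01-01T00:00:00+00:00",
    "valid_until": "2026-03-31T23:59:59+00:00",
}


def sign(mandate):
    """Sign a canonical JSON encoding of the mandate."""
    payload = json.dumps(mandate, sort_keys=True).encode()
    return hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()


def authorise(mandate, signature, *, merchant, category, amount_inr, spent_this_week_inr, now):
    """Return (allowed, reason); every check runs before a payment rail is contacted."""
    if not hmac.compare_digest(sign(mandate), signature):
        return False, "invalid signature"
    start = datetime.fromisoformat(mandate["valid_from"])
    end = datetime.fromisoformat(mandate["valid_until"])
    if not start <= now <= end:
        return False, "outside validity window"
    if merchant != mandate["merchant"] or category != mandate["category"]:
        return False, "outside merchant or category scope"
    if spent_this_week_inr + amount_inr > mandate["weekly_limit_inr"]:
        return False, "weekly limit exceeded"
    return True, "ok"


SIGNATURE = sign(MANDATE)
NOW = datetime(2026, 2, 1, tzinfo=timezone.utc)
print(authorise(MANDATE, SIGNATURE, merchant="bigbasket", category="grocery",
                amount_inr=1500, spent_this_week_inr=0, now=NOW))  # (True, 'ok')
```

Verifying the signature first means that a mandate edited after signing (for example, with a raised limit) is rejected before any of its fields are trusted.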

AP2 functions as the trust and authorisation layer; actual fund movement continues through established gateways (Adyen, Stripe, Checkout.com, PayPal), which handle regulatory compliance, fraud detection, and settlement. PayPal’s live integration with both UCP and AP2 provides a production proof of concept: merchants using PayPal’s agentic checkout can offer fully conversational purchase flows, with AP2 providing Mandate verification and fraud controls that make autonomous transactions secure at scale.

2.4 India’s UPI Agentic Payments: National-Scale Deployment

While AP2 builds the global standard, India is demonstrating agentic commerce at national scale through innovations in its Unified Payments Interface. UPI Reserve Pay allows consumers to pre-authorise merchant-specific spending balances from which transactions are debited without per-transaction PIN or OTP confirmation. UPI Circle extends this to delegated payment authority within defined spending scopes, which is precisely the consent architecture agentic commerce requires.

Razorpay, in collaboration with the National Payments Corporation of India (NPCI) and OpenAI, is piloting an agentic checkout framework in which consumers interact conversationally with an AI to place orders, with the agent executing payment autonomously via the UPI stack within pre-authorised limits.

BigBasket’s production deployment is the clearest proof of concept for the fully autonomous commerce loop: a user tells the AI assistant to order their usual weekly groceries; the agent queries order history, checks availability, constructs the basket, and completes payment without the user leaving the conversational interface. This is a production capability on one of India’s largest grocery platforms, not a pilot with a controlled user group. CTOs who consider agentic commerce a 2027 or 2028 problem should recalibrate that timeline now.
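The four steps of that loop (query history, check availability, build the basket, pay) can be sketched end to end. This is not BigBasket's implementation: the "appears in at least half of past orders" rule, the data shapes, and the fallback to user confirmation when the pre-authorised balance is too small are all assumptions made for illustration.

```python
from collections import Counter


def usual_items(order_history, min_share=0.5):
    """Items that appear in at least `min_share` of past orders."""
    counts = Counter(item for order in order_history for item in set(order))
    threshold = min_share * len(order_history)
    return sorted(item for item, n in counts.items() if n >= threshold)


def build_basket(items, stock, prices):
    """Keep only items currently in stock and total their prices."""
    basket = [item for item in items if stock.get(item, 0) > 0]
    return basket, sum(prices[item] for item in basket)


def place_order(order_history, stock, prices, reserved_balance_inr):
    """Pay autonomously only when the total fits the pre-authorised balance."""
    basket, total = build_basket(usual_items(order_history), stock, prices)
    status = "paid" if total <= reserved_balance_inr else "needs_confirmation"
    return {"status": status, "basket": basket, "total_inr": total}


history = [["milk", "rice", "eggs"], ["milk", "eggs", "tea"], ["milk", "rice"]]
stock = {"milk": 10, "rice": 0, "eggs": 5, "tea": 3}
prices = {"milk": 60, "rice": 400, "eggs": 90, "tea": 150}

print(place_order(history, stock, prices, reserved_balance_inr=2000))
# {'status': 'paid', 'basket': ['eggs', 'milk'], 'total_inr': 150}
```

In this run, tea is dropped because it appears in only one of three orders, and rice is dropped because it is out of stock.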

The Generative Experience Layer

The consumer-facing surface of the Autonomous Commerce Stack is a generative stream: visual assets, video content, and interfaces produced dynamically in response to individual user context and real-time signals, rather than designed once and published. Four tools define this layer in Google’s 2025–2026 ecosystem, each addressing a distinct bottleneck in the content production pipeline.

3.1 Nano Banana Pro: Dynamic Creative Optimisation 2.0

Dynamic Creative Optimisation has been a standard performance marketing tool for over a decade. Nano Banana Pro does not merely improve DCO; it restructures its economics entirely. Built on Gemini 3 Pro Image, it introduces Character Consistency and Style Locking, ensuring a virtual product model and brand design language remain precisely consistent across thousands of generated variants. A product can be placed in a Diwali gifting context for one user, a minimalist white background studio setting for another, and a relevant urban lifestyle environment for a third, with each variant maintaining precise product fidelity and brand compliance.

The commercial implication is a structural reshaping of creative production economics. A traditional photoshoot for a fashion brand might generate 50 to 100 usable images over two days at significant cost. Nano Banana Pro can generate thousands of contextually personalised, culturally resonant variants in the time it takes to write the prompts. For platforms operating across multiple languages, cultural contexts, and regional markets (every major Indian platform and most large global retailers), this is a category-level competitive advantage, not an incremental efficiency gain.

3.2 Veo 3.1: Generative Video at Commerce Scale

Video consistently delivers the highest engagement and conversion metrics across eCommerce categories, but it also carries the highest production cost and longest lead time. Veo 3.1 directly targets this constraint by generating 1080p video clips with natively synchronised audio from static product images and text prompts. The synchronised audio capability, which distinguishes Veo 3.1 from earlier generative video tools, eliminates the post-production audio layering step that has historically made video production difficult to automate.

The most commercially significant application is personalised CRM video at scale: instead of a single promotional video deployed to an entire subscriber list, a retailer can generate individually tailored video messages, referencing a customer’s recent purchase history, browsing behaviour, or loyalty tier, rendered and dispatched at CRM trigger time rather than days earlier in a batch production process. This transforms video from a campaign-level asset into a real-time customer engagement tool.

3.3 Stitch and Pomelli: Code Generation and Brand Governance

Stitch collapses the design-to-production gap by generating production-ready React and Tailwind CSS code directly from natural language descriptions or wireframes, with bidirectional Figma integration. A/B tests that previously required a full engineering sprint can be generated, reviewed, and deployed within a day. The constraint on experimentation velocity shifts from engineering capacity to hypothesis quality, which is precisely the right constraint. Stitch also simplifies localisation: generating component variants for different languages, reading directions, and cultural conventions becomes a prompt rather than a sprint.

Pomelli addresses the brand erosion risk that generative scale introduces. When hundreds of thousands of assets are generated by AI systems, the consistency that defines a brand’s visual identity can degrade rapidly without systematic governance. Pomelli scans a retailer’s digital estate to extract a “Business DNA” profile (colour palette, typography, tone of voice, compositional conventions), which is applied as a constraint layer to all subsequent generative outputs. In regulated commerce categories (financial products, healthcare, food and beverage), Pomelli’s tone-of-voice governance is not optional; it is a compliance requirement.

Further Reading: 2026 State of UI/UX Design for Digital Commerce: From Interface to Intellect

Autonomous Engineering Operations

The agentic transformation of the commercial enterprise does not stop at the consumer-facing layer. The engineering infrastructure that builds, maintains, and evolves digital commerce platforms is itself becoming autonomous, a dimension of the shift that is less publicly discussed but commercially significant.

4.1 Antigravity and Jules

Antigravity replaces the traditional IDE with an Agent Manager interface through which a lead engineer spawns, monitors, and directs multiple autonomous coding agents working in parallel on distinct tasks. Verification shifts from reviewing raw code diffs to assessing structured Artifacts: generated summaries, test coverage reports, screen recordings of UI behaviour, and change impact analyses produced by each agent. The cognitive task of the senior engineer evolves from writing and reviewing code to evaluating agent reasoning and approving architectural decisions.

A five-person engineering team using Antigravity can sustain the throughput of a twenty-person conventional team, not by replacing engineers but by multiplying each engineer’s effective output through parallel agent delegation.

Jules is the asynchronous coding agent within this ecosystem. Powered by Gemini 3 Pro and executing within isolated secure cloud VMs, Jules receives a task, clones the relevant repository, implements the change, writes and runs test suites, and opens a pull request with a structured summary, without human interaction during execution. It operates without cognitive load constraints or working hours limitations.

The governance implication is important: Jules’ autonomy makes robust code review processes more important, not less. Every Jules-generated PR must pass through the same review gates as human-authored code. The efficiency gain is in generation speed, not review bypass.

4.2 LLM Observability: The Non-Negotiable Governance Layer

Deploying autonomous agents in production commerce environments without robust observability is equivalent to running a data centre without logging. Standard application performance monitoring tools are architecturally blind to LLM-specific failure modes: hallucination, reasoning drift, prompt injection, context window overflow, and inconsistent tool invocation.

LangSmith and Arize Phoenix, both natively integrated with Google Vertex AI, provide end-to-end tracing of agent execution chains. LangSmith allows CTOs to replay and audit any agent decision at the prompt level, answering why the agent recommended this product or what reasoning chain led to this purchase decision. Arize Phoenix extends this with statistical drift detection, alerting teams when agent behaviour diverges from baseline even when individual transactions appear to complete successfully. The rule is simple: deploy observability before you deploy autonomous agents in production.
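The idea behind drift detection, where each transaction succeeds but aggregate behaviour has moved, can be shown with a basic z-test on a behavioural metric. This is a deliberately crude sketch using only the standard library; it does not use the LangSmith or Arize Phoenix APIs, and the metric (daily share of conversations in which the agent issues a refund) and the threshold of 3 are assumptions.

```python
from math import sqrt
from statistics import mean, stdev


def has_drifted(baseline, recent, z_threshold=3.0):
    """Flag drift when the recent mean sits far outside the baseline distribution."""
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        return mean(recent) != mu
    standard_error = sigma / sqrt(len(recent))
    z = abs(mean(recent) - mu) / standard_error
    return z > z_threshold


# Hypothetical daily refund-issuance rates for a support agent.
baseline_days = [0.10, 0.12, 0.11, 0.09, 0.10, 0.11, 0.10, 0.12]
stable_days = [0.10, 0.11, 0.12]
shifted_days = [0.18, 0.20, 0.19]

print(has_drifted(baseline_days, stable_days))   # False
print(has_drifted(baseline_days, shifted_days))  # True
```

Every conversation in the shifted window may have completed without error; only the comparison against the recorded baseline reveals the change, which is why baselines need to exist before agentic scope expands.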

4.3 Supply Chain Intelligence

Multi-agent orchestration extends to fulfilment through platforms like Project44, which deploy AI disruption detection and data quality agents to monitor in-transit shipments across carrier networks in real time. These agents autonomously identify transit delays before they become consumer-visible exceptions, resolve carrier data discrepancies without human intervention, and trigger proactive customer communications when exceptions cannot be resolved.

For platforms where a single peak trading day involves hundreds of thousands of active shipments, the labour cost of manual monitoring is prohibitive and the coverage is incomplete. Autonomous supply chain agents make comprehensive shipment intelligence economically viable at any scale, ensuring the post-purchase experience matches the quality of the pre-purchase one.

Strategic Implementation & Governance

Architectural clarity about the Autonomous Commerce Stack is necessary but not sufficient. The implementation question (how to sequence the transition, manage organisational change, and govern autonomous systems responsibly) is where strategy becomes reality.

5.1 The Three-Phase Migration Roadmap

| PHASE 1: COGNITIVE FOUNDATION (Months 1–3) |

| Deploy Vertex AI Search for Retail as the semantic retrieval layer. Target: measurably improve search relevancy and eliminate zero-result queries against baseline. |

| Replace static ad copy and category descriptions with Gemini 3 Flash generated variants. Instrument A/B testing. Target: quantify CTR and conversion uplift. |

| Implement Pomelli brand profiling. Generate the Business DNA profile and apply as a constraint layer to all subsequent generative tools. |

| Deploy LangSmith or Arize Phoenix for LLM observability. Establish performance baselines before expanding agentic scope. |

| PHASE 2: AGENTIC FOUNDATION (Months 4–9) |

| Implement UCP endpoints on the commerce backend. Begin with read-only capabilities (catalogue discovery, availability checks) before enabling transactional endpoints. |

| Deploy Antigravity for the engineering team. Establish code review controls and merge gates for Jules-generated pull requests. |

| Implement A2A for internal agent coordination. Initial use case: customer service agent-to-agent escalation with full context transfer. |

| Launch Nano Banana Pro for performance creative. Pilot Veo 3.1 CRM video on a defined customer segment and measure engagement against static creative control. |

| PHASE 3: AUTONOMOUS ENTERPRISE (Months 10+) |

| Enable AP2 for agentic checkout on high-velocity, low-friction categories. Implement Mandate scoping and per-category spending controls. |

| For Indian deployments: integrate UPI Circle/Reserve Pay for pre-authorised agentic payment. Pilot with grocery or FMCG subscription replenishment flows. |

| Deploy A2A agents for cross-platform selling, exposing UCP endpoints to the Gemini interface and third-party agent ecosystems. |

| Integrate Project44 or equivalent for autonomous supply chain exception management and proactive consumer communications. |

5.2 Security and Governance

The shift to agentic systems introduces two primary attack vectors requiring proactive defence. Prompt injection embeds malicious instructions in content an agent is expected to process (a product description, supplier document, or customer review), causing the agent to deviate from its Mandate, reveal sensitive account data, or introduce vulnerabilities into a production codebase. Data poisoning targets the retrieval or training data that grounds agent decisions, systematically skewing behaviour in commercially damaging ways that are difficult to detect without active monitoring.

Effective governance requires four structural elements: formally defined Mandates for every agent authorised to execute financial transactions or modify data; immutable, human-readable audit trails of all agent actions; a regular adversarial red-teaming cadence using the OWASP Top 10 for Agentic Applications and the AgentHarm Benchmark; and rehearsed incident response playbooks for agentic failure scenarios. Google Agentspace provides the infrastructure layer, isolating agent execution within enterprise VPC perimeters, enforcing IAM-based role access controls, and maintaining comprehensive agent action logs. LangSmith and Arize Phoenix provide the monitoring layer that makes data poisoning and reasoning drift detectable before they cause significant damage.
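One common way to make an audit trail tamper-evident is to chain entries by hash, so that altering any past record invalidates everything after it. The sketch below shows that technique in a minimal form; it is not how Google Agentspace stores its logs, and the entry fields are assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry
FIELDS = ("agent", "action", "detail", "prev")


def _digest(body):
    """Hash a canonical JSON encoding of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log, agent, action, detail):
    """Append a human-readable entry linked to the hash of the previous one."""
    prev = log[-1]["hash"] if log else GENESIS
    body = {"agent": agent, "action": action, "detail": detail, "prev": prev}
    log.append({**body, "hash": _digest(body)})


def verify(log):
    """Recompute every hash and link; any edit to a past entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        body = {key: entry[key] for key in FIELDS}
        if entry["prev"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True


log = []
append_entry(log, "procurement-agent", "purchase_order", "PO for 40,000 INR to approved supplier")
append_entry(log, "procurement-agent", "payment", "Settled under Mandate limit")
print(verify(log))  # True

log[0]["detail"] = "PO for 4,000 INR to approved supplier"  # simulated tampering
print(verify(log))  # False
```

In practice the latest hash would also be anchored somewhere the agents cannot write to, since a chain held entirely in one mutable store can be rebuilt by whoever controls it.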

Real World Validation & Case Studies

Architecture is most compelling when anchored in demonstrated commercial outcomes. The case studies below represent the most substantive public evidence available for the commercial viability of the Autonomous Commerce Stack across markets, categories, and scale levels.

6.1 India: The World’s Most Advanced Agentic Commerce Testbed

India’s position as the leading laboratory for the Autonomous Commerce Stack reflects a convergence of structural advantages that rarely coincide in a single market: 600 million+ active digital users, a nationally deployed real-time payments infrastructure (UPI) processing over 13 billion transactions monthly, regulatory willingness to pilot novel financial frameworks, and technology-native commerce companies competing with sufficient intensity to pursue aggressive platform bets. The case studies below are not isolated experiments; they are systematic, production-scale deployments.

Flipkart is an officially listed UCP ecosystem partner, signalling a distribution infrastructure commitment rather than a feature decision. By ensuring its catalogue remains agent-accessible as AI-mediated commerce journeys grow, Flipkart is positioning itself as a preferred destination in a channel that does not yet account for the majority of its volume but will. Its agentic AI chatbots demonstrate the stack’s value in absorbing high-volume support interactions without proportional cost scaling, while Gemini-powered semantic search resolves complex, intent-rich queries that keyword-based systems cannot handle.

Myntra’s 37% ROAS improvement through full-funnel Google AI ad solutions reflects the compounding effect of AI-mediated improvements at every funnel stage: more precisely targeted impressions, more contextually relevant creative at scale, more accurate conversion attribution, and more intelligent bid management. The 37% is the aggregate of dozens of individually marginal improvements that only become significant in combination. This compounding dynamic is the signature of AI-native performance marketing, and it is why the gap between early adopters and later movers tends to widen rather than converge. The “My Stylist” feature transforms the platform’s value proposition from a product catalogue to a personal style advisor, democratising a service previously available only to high-value customers willing to engage a human stylist.

BigBasket is the reference architecture for autonomous commerce: demand forecasting across thousands of SKUs; computer vision for quality control; conversational agents for natural language order placement; and agentic UPI payment completing the purchase loop without human intervention. Grocery is one of the most operationally demanding commerce categories: perishable inventory, time-sensitive delivery, highly variable demand, and near-perfect availability expectations. BigBasket’s success under these constraints provides particularly strong evidence for the stack’s robustness under real-world operational stress.

Adya demonstrates that the stack is not exclusively for large enterprises. By fine-tuning custom LLMs on Google Cloud with Gemini-powered coding tools, Adya reduced model build costs by 85%, improved model performance by 21%, and accelerated time to market by 30%, then applied those gains to building AI-powered commerce tools for smaller Indian retailers. The Autonomous Commerce Stack, through Jules and Antigravity, creates leverage that allows small engineering teams to deliver at a speed and quality level previously associated only with large organisations.
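The compounding claim can be made concrete with a short calculation. As an assumption for illustration only (no breakdown of the Myntra figure is given here), suppose the aggregate uplift $G = 0.37$ comes from $n = 24$ independent improvements of equal size $g$ that combine multiplicatively:

$$
\prod_{i=1}^{n} (1 + g_i) = 1 + G
\quad\Longrightarrow\quad
g = (1 + G)^{1/n} - 1 = 1.37^{1/24} - 1 \approx 0.0132
$$

Under those assumptions each individual improvement is only about 1.3%, small enough to be lost in the noise of a single A/B test, yet two dozen of them together produce the full 37%.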

6.2 Global Enterprise Validation

Walmart and Wayfair’s co-development of UCP signals that the protocol will become standard infrastructure for AI-assisted purchase journeys. Wayfair’s implementation preserves merchant-of-record control over fulfilment quality even when purchase is initiated within Gemini, demonstrating that UCP adoption extends the merchant’s relationship into a new channel rather than surrendering it. Walmart’s integration applies past purchase history and Walmart+ membership context directly within AI conversations, making the experience more contextually relevant than a generic catalogue browse.

Carrefour’s migration from on-premises infrastructure to Google Cloud, delivering 40% operating cost reduction and 45% energy consumption reduction, illustrates a foundational point: the cloud migration is not a precursor to AI adoption; it is a structural prerequisite. The data infrastructure and managed services underpinning the Autonomous Commerce Stack are native cloud capabilities. Organisations on legacy infrastructure face a wider gap than the capabilities discussion alone suggests.

Stitch Fix’s 85% trend forecasting accuracy, achieved by training generative AI on social media signals and sales pattern data, generated a 40% increase in Average Order Value and 20% reduction in inventory holding costs. Critically, this value was created upstream in the buying process, before products were stocked. The Autonomous Commerce Stack’s impact extends across the entire commercial value chain, not only at the consumer touchpoint.

Conclusion: The Protocol Is the Product

Digital commerce has moved through three defining paradigms. First, the store was a website, a digital catalogue navigable by humans with browsers. Second, it became a platform, a composable architecture of APIs and microservices, optimised for developer flexibility and deployment speed. In the third paradigm, now actively forming, the store is a protocol, a machine-readable declaration of capabilities, discoverable and transactable by autonomous agents operating on behalf of human principals.

This is not a metaphor. A UCP-compliant store with AP2-authorised payment infrastructure is a fundamentally different kind of commercial entity, one that participates in agent-mediated commerce flows with no human-navigable interface at all. The businesses that architect their systems to be discovered, trusted, and transacted with by autonomous agents will capture a disproportionate share of AI-mediated commerce volume. Those that do not will find their effective distribution shrinking as the channel mix shifts in ways their current architecture cannot address.

The evidence from early adopters is consistent on one point: the commercial value of the Autonomous Commerce Stack is not theoretical, and the timeline for competitive consequence is not distant. The organisations structuring their roadmaps around this paradigm shift in 2025 are not early adopters taking a calculated risk. They are late majority organisations catching up with a transition that has already begun. The winning stack of 2026 is the one that makes your business legible, negotiable, and trustworthy to machines.

| KEY STRATEGIC IMPERATIVES |

| Treat UCP compliance as a distribution decision with multi-year competitive consequences, not a feature decision. |

| Deploy LLM observability before you deploy autonomous agents in production. You cannot govern what you cannot trace. |

| Implement AP2 Mandates as the cryptographic trust layer for every agentic financial action. Spending authority must be scoped and verifiable. |

| Treat India as an 18-month preview of global commerce architecture. What BigBasket is doing today is the global standard in 2027. |

| The constraint is not technology. It is organisational capacity to absorb architectural change at the speed the competitive environment now demands. |

The PracticeNext Framework

About PracticeNext: We build custom, AI-native digital commerce infrastructure designed to quickly scale and accelerate your business growth.