A strategic blueprint for CXOs and digital leaders navigating the transition from click-based search to machine-to-machine discovery, agentic commerce, and AI-generated answer ecosystems.

Executive Summary

The rules of digital discovery have changed permanently. Search optimization is no longer about rankings; it is about becoming the answer.

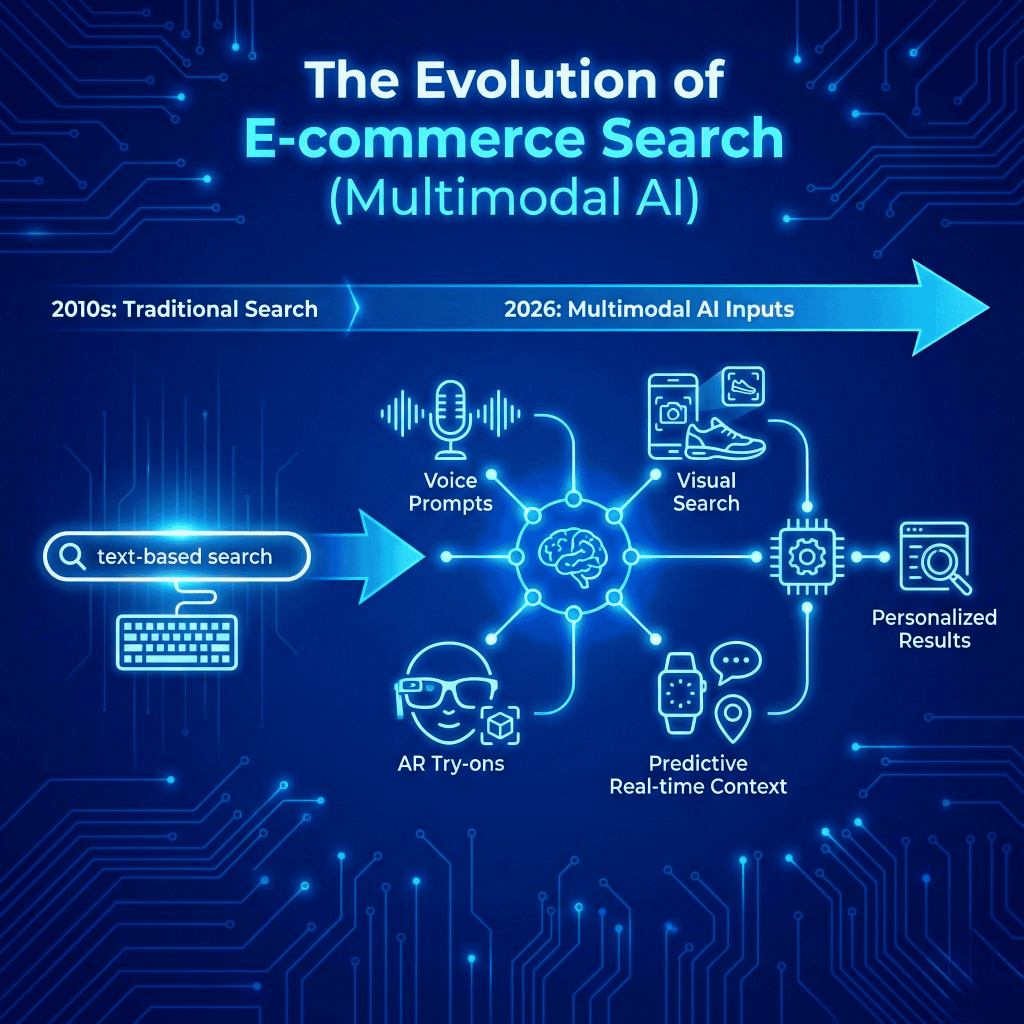

By 2026, the traditional model of search engine optimization (earning visibility through indexed page rankings and click-throughs) has been structurally displaced. The new competitive arena is artificial intelligence: the large language models, autonomous shopping agents, and generative answer engines that now intercept consumer intent before a web page is ever visited.

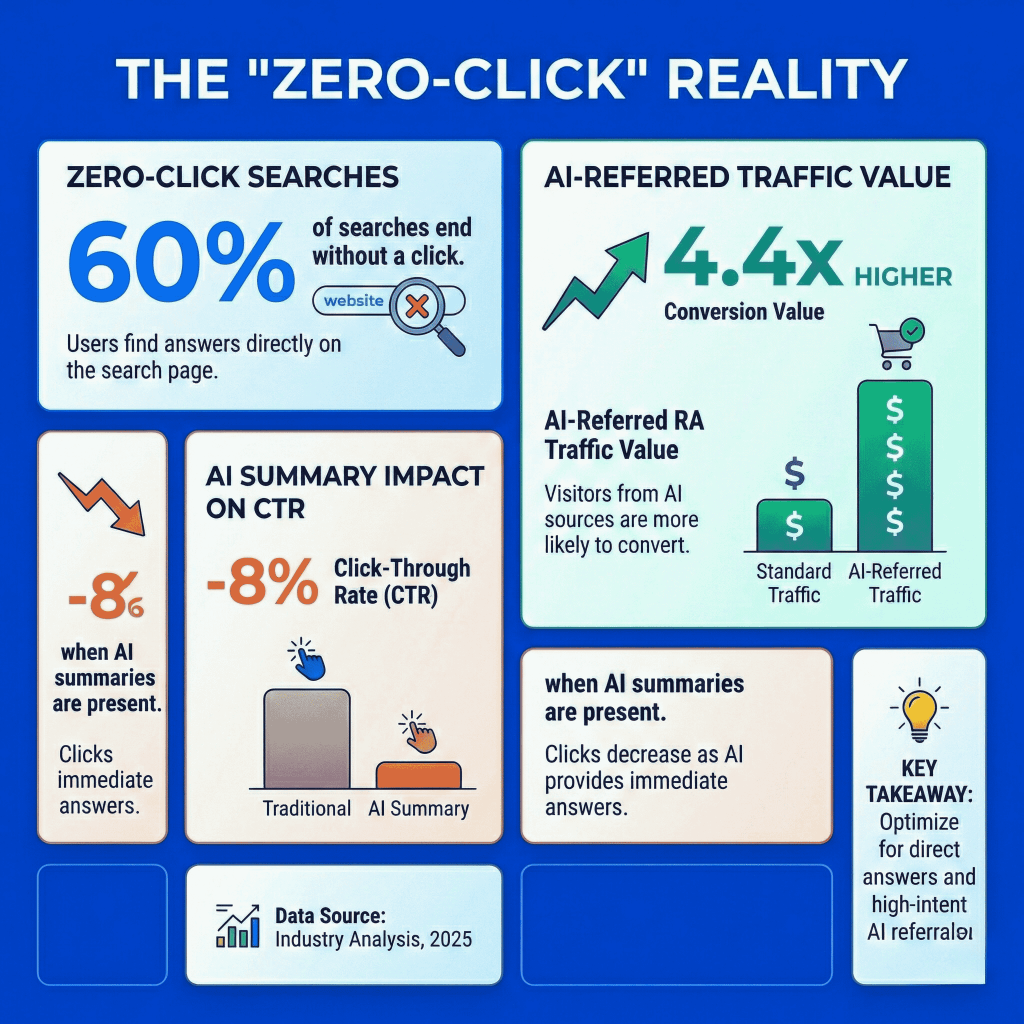

This transformation is not incremental. Roughly 60% of all search queries now end without a single external click. When an AI Overview or LLM-generated response appears, organic click-through rates collapse from 15% to 8%. Two billion people encounter Google’s AI summaries every month. ChatGPT alone serves 700 million weekly active users across more than five billion monthly visits.

For enterprise leaders, the question is no longer whether to adapt. It is how quickly the organization can restructure its content, data architecture, and measurement systems for a world in which machines, not humans, make the first discovery decision.

| The Core Strategic Imperative: Brands that fail to structure their digital presence for machine comprehension face zero-click extinction: organic traffic evaporates as autonomous algorithms bypass unstructured websites in favour of technically compliant competitors. This white paper provides the strategic blueprint to prevent that outcome and capture the compounding ROI of AI-driven discovery. |

The white paper covers nine interconnected domains: the data realities reshaping search behaviour; the disciplines of Answer Engine Optimization (AEO) and Generative Engine Optimization (GEO); the technical standards underpinning AI visibility; the evolution of trust and provenance via E-E-A-T and C2PA; the emergence of agentic commerce; proven ROI benchmarks; the India market as a global innovation laboratory; visual and social search convergence; and the new KPI framework that has replaced traditional rank tracking.

The Data Reality: How Search Behaviour Has Shifted

The numbers are unambiguous. AI-mediated discovery is no longer emerging; it has arrived.

Traditional search volume has declined by 25% year on year as users migrate to conversational AI interfaces. The consequence is the zero-click phenomenon: queries resolved entirely within the search engine or AI platform, with no outbound traffic to brand websites. This is not a temporary dip. It is a structural change in how information moves from source to consumer.

| 60% of searches end without a click | 2B monthly users of Google AI Overviews | 700M weekly active ChatGPT users | 4.4× higher conversion value from AI referrals |

The picture is nuanced, however, and contains genuine opportunity for brands that understand the mechanics. ChatGPT users click an average of 1.4 external links per session, more than double Google’s 0.6, and half of those clicks lead to business and commercial service websites. This means that when an AI platform does surface a brand, the resulting visitor is far more qualified: worth 4.4 times the conversion value of a traditional organic visitor.

Demographic momentum reinforces the trend. Approximately 35% of Gen Z consumers in the United States now use AI chatbots as their primary discovery tool, bypassing search engines entirely. This cohort will constitute the majority of online spending within the decade. Brands optimizing for AI citation today are building the infrastructure that will determine their market share for the next ten years.

| What This Means for Enterprise Strategy: AI search is not destroying organic traffic; it is relocating it. Traffic that was broadly distributed across many websites is consolidating around the sources that AI platforms choose to cite. The strategic imperative is to become one of those cited sources, consistently, across multiple AI engines simultaneously. |

The trajectory is clear. AI search traffic is projected to surpass traditional organic traffic entirely by 2028. Organizations that treat this as a future problem are already two years behind the competitive curve.

AEO and GEO: The Two Disciplines That Define Modern Search

Answer Engine Optimization and Generative Engine Optimization are complementary, not competing. Together they deliver full-funnel AI visibility.

The industry has converged on two distinct but interdependent optimization disciplines. Understanding the difference between them and executing both is the foundation of any credible AI search strategy.

Answer Engine Optimization (AEO): Winning Deterministic Features

AEO is the practice of structuring content so that search engines can extract it as a direct, definitive answer. Its primary targets are Google AI Overviews, featured snippets, and voice search responses: contexts where the engine selects a single answer from a structured source.

The governing principle of AEO is deterministic formatting. Content must present a clear question followed immediately by a concise, two-to-four sentence answer. If a piece of content does not structurally resemble a direct response, search engines will not treat it as one. This is not a stylistic preference; it is a technical prerequisite.

Alongside content structure, JSON-LD schema markup is the other non-negotiable pillar. Implementing FAQ, Product, Review, and Article schema gives AI systems machine-readable confirmation of factual claims. This allows search engines to verify brand information without relying on interpretation. Consistent entity data, meaning accurate Name, Address, and Phone (NAP) information across all directories and platforms, reinforces this verification layer.
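As an illustration of the question-then-answer structure and the FAQ schema described above, the following minimal sketch builds a schema.org FAQPage document with Python's standard library. The helper name `build_faq_jsonld` is hypothetical, and the sample question and answer are for illustration only; real markup should mirror the text actually visible on the page.

```python
import json


def build_faq_jsonld(qa_pairs):
    """Return a schema.org FAQPage document as a JSON-LD string."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "FAQPage",
            "mainEntity": [
                {
                    "@type": "Question",
                    "name": question,
                    "acceptedAnswer": {"@type": "Answer", "text": answer},
                }
                for question, answer in qa_pairs
            ],
        },
        indent=2,
    )


# Illustrative question/answer pair; replace with the page's real content.
faq_markup = build_faq_jsonld(
    [
        (
            "How long does AEO implementation take?",
            "Foundational AEO can typically be deployed in a 4 to 8-week sprint. "
            "Full catalog optimization usually takes 3 to 6 months.",
        )
    ]
)

# The output is intended for a <script type="application/ld+json"> tag.
print(faq_markup)
```

Generating the markup from the same source as the visible copy is one way to keep the answer text and the schema from drifting apart.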

| AEO Implementation Timeline: Most enterprise eCommerce brands can deploy foundational AEO (structured content formatting and JSON-LD schema) within a 4–8 week sprint. Full catalog optimization across large product ranges typically requires 3–6 months. The work compounds: each schema-compliant page incrementally strengthens the brand’s entity footprint across the broader web. |

Generative Engine Optimization (GEO): Winning Probabilistic AI Narratives

Where AEO targets structured extraction, GEO targets the conversational knowledge synthesis happening inside large language models: ChatGPT, Claude, Microsoft Copilot, Perplexity, and their successors. These systems do not match queries to schema tags. They probabilistically assemble narratives from billions of data points scraped from across the web.

This distinction changes the optimization strategy entirely. GEO is not about telling an AI what your brand is; it is about ensuring that enough third-party sources say the same accurate things about your brand that the model’s assembled picture of your entity is correct and prominent. Reviews, forum mentions, industry publication coverage, affiliate content, and analyst citations all contribute to how an LLM constructs a brand narrative.

For eCommerce and SaaS organizations, GEO execution requires identifying the high-value prompts that trigger commercial discovery (for example, ‘what is the best CRM for enterprise sales teams?’ or ‘which running shoe has the highest cushioning under £150?’) and systematically seeding accurate, consistent brand data across every platform that LLMs crawl. Semantic relevance and topical authority replace keyword density as the primary optimization variables.

The financial case for combining both disciplines is robust. An integrated SEO and GEO strategy generates an average ROI of 317% over 12 to 18 months, roughly a 4.17× return on investment. Mid-market eCommerce brands, typically in the £4M to £16M revenue range, consistently achieve even higher returns of 412% to 447%, benefiting from agile execution and cleaner conversion funnels.

The Technical Backbone: llms.txt and Machine Readable Catalogs

What robots.txt did for search crawlers, llms.txt does for AI language models, and adoption is accelerating rapidly.

Large language models consume enormous volumes of web content to generate answers. But complex, JavaScript-heavy eCommerce websites, built for human browsing, consistently cause AI crawlers to misread or skip critical product data. The result is hallucinated specifications, inaccurate pricing, and missing inventory details appearing in AI-generated responses. The llms.txt protocol exists to solve this.

What Is llms.txt?

An llms.txt file is a lightweight, Markdown-formatted directory placed at the root of a web server. Its function is to guide AI crawlers directly to a brand’s highest-quality, most structured data: product feeds, API documentation, pricing specifications, and compatibility information. By providing a clean, flat text representation of this data, brands eliminate the friction that causes AI hallucinations and ensure that tools like ChatGPT and Perplexity can accurately parse and cite their catalogs.

The standard operates analogously to robots.txt, which tells search crawlers where to go and where to stay away, but with an additional layer of quality signaling. Rather than simply granting access, llms.txt actively surfaces the most machine-readable version of a brand’s data.
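To make the file format concrete, here is a minimal sketch that renders an llms.txt body following the layout of the public llms.txt proposal: an H1 title, a blockquote summary, then H2 sections containing Markdown links. The function name `render_llms_txt`, the store name, and every URL are hypothetical placeholders.

```python
def render_llms_txt(site_name, summary, sections):
    """Render an llms.txt body: H1 title, blockquote summary, H2 link lists."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# All names and URLs below are hypothetical placeholders.
llms_txt = render_llms_txt(
    "Example Store",
    "Structured product, pricing, and compatibility data for AI crawlers.",
    {
        "Catalog": [
            ("Product feed", "https://example.com/feeds/products.md",
             "Full SKU list with specifications"),
            ("Pricing", "https://example.com/feeds/pricing.md",
             "Current prices and currency"),
        ],
        "Developers": [
            ("API documentation", "https://example.com/docs/api.md",
             "Inventory and order endpoints"),
        ],
    },
)

# The result would be served as plain text at the site root, e.g. /llms.txt.
print(llms_txt)
```

Generating the file from the product feed, rather than maintaining it by hand, is one way to keep it current as the catalog changes.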

Why This Matters Commercially

Dell Technologies was among the first major enterprise brands to publicly deploy an llms.txt architecture, signaling to the market that this is enterprise-grade infrastructure rather than an experimental tactic. Digital agencies implementing llms.txt for eCommerce clients have reported organic traffic increases of up to 25% attributable to improved AI crawlability: not from more human visitors, but from more accurate AI citations driving higher-intent referrals.

For eCommerce brands with large catalogs, the compounding impact is substantial. Each product page that an AI model can accurately read and cite represents a potential touchpoint in an autonomous purchase workflow, a topic explored in depth in the Agentic Commerce section.

| Implementation Priority: Brands with more than 500 SKUs should treat llms.txt as a Q1 infrastructure priority. The competitive advantage is time-limited: early movers establish AI citation patterns that become self-reinforcing as models update their training data. |

E-E-A-T and Cryptographic Trust: The New Authority Signals

Google’s quality framework has evolved from a reviewer’s checklist into a computational trust engine, and cryptographic provenance is now its most powerful signal.

The mass availability of generative AI has made low-quality content effortless to produce and increasingly difficult to distinguish from expert writing. Search engines have responded by recalibrating their quality filters dramatically. Google’s E-E-A-T framework (Experience, Expertise, Authoritativeness, and Trustworthiness) has transitioned from editorial guidance into a rigorous computational scoring system.

What E-E-A-T Means in Practice

The Experience dimension has become the most heavily weighted vector in 2026. Algorithms actively look for signals of first-hand involvement: original photography with authentic EXIF data, documented operational processes, verifiable human authorship, and lived expertise that diverges from the generic patterns of AI-generated prose. Content that could plausibly have been written by an LLM without domain experience is increasingly penalized, regardless of its technical accuracy.

The practical implication is not that AI tools are forbidden (they are widely used and accepted) but that human editing, expert input, and authentic experience signals must be demonstrably woven through all content. AI can accelerate drafting; it cannot substitute for genuine domain authority.

C2PA: Cryptographic Proof of Authenticity

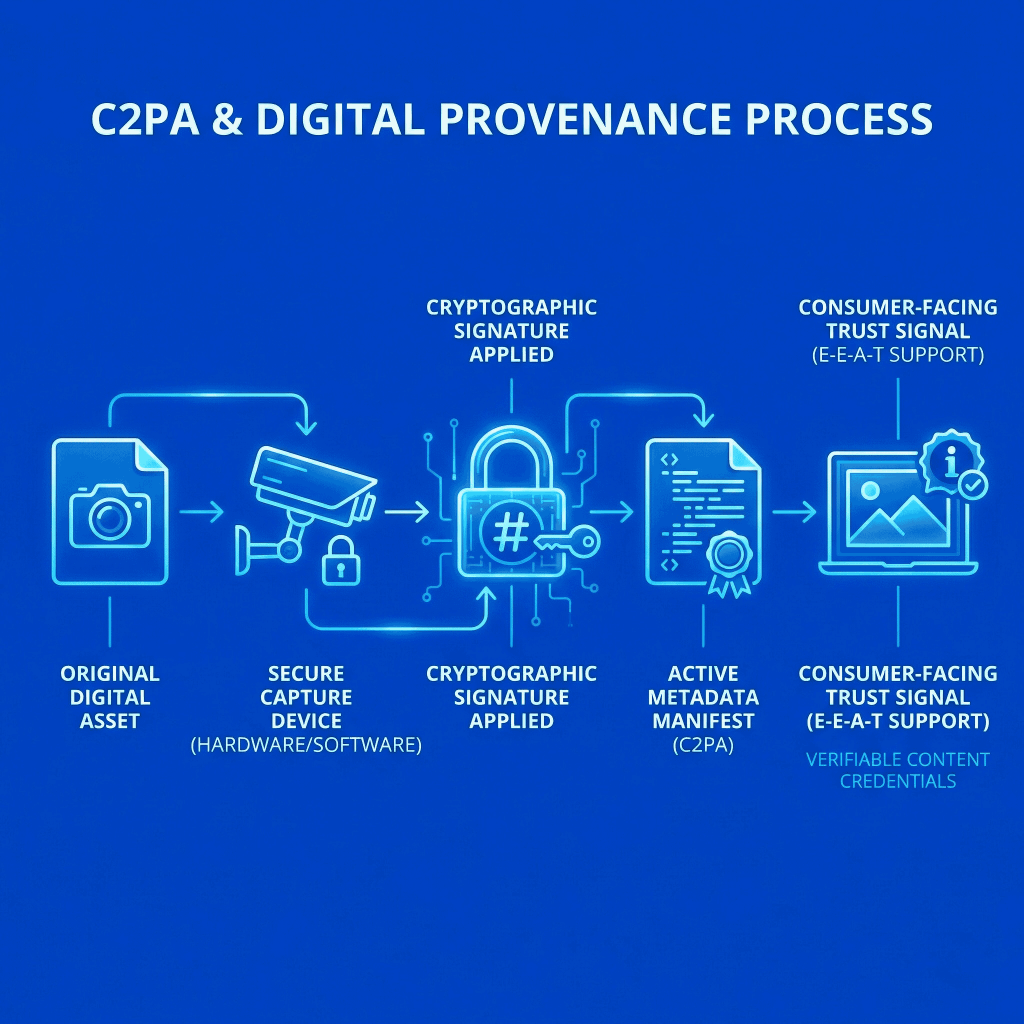

The deeper structural response to synthetic content is the emergence of cryptographic provenance standards. The Coalition for Content Provenance and Authenticity, the C2PA initiative led by Microsoft, Adobe, and Intel, has established a global framework for digitally signing media at the point of origin. A C2PA Content Credential creates a tamper-evident chain of custody: any subsequent modification or republication is transparently logged.

Hardware integration has accelerated adoption dramatically. The Google Pixel 10 and Sony’s professional video camera range now embed C2PA signatures natively at capture, meaning the credential is attached before any post-processing occurs. This removes the need for retroactive signing workflows.

For enterprise SEO, the consequence is clear. Google has integrated C2PA metadata into its core search ranking systems, using cryptographic trust signals to inform content policy and verify asset authenticity via its ‘About This Image’ feature. In 2026, attaching cryptographic credentials to core digital media is not a transparency initiative; it is a ranking factor. Brands that publish unsigned media are ceding a verifiable trust signal to competitors who sign theirs.

| Invisible Watermarking as a Complementary Layer: Beyond C2PA signatures, invisible digital watermarking (machine-readable signals embedded within the image or audio data itself) provides a soft-binding provenance mechanism that persists even if metadata is stripped. Brands managing large volumes of visual assets should implement both: C2PA for chain-of-custody verification and watermarking for persistent identity. |

Agentic Commerce: Competing for Machine Preference

The next competitive frontier is not human attention; it is agent preference. Autonomous AI is already executing purchases on behalf of consumers.

The most structurally disruptive development in the 2026 digital economy is not a search algorithm update. It is the emergence of agentic commerce: a paradigm in which autonomous AI agents observe consumer behaviour, interpret intent, compare products across the entire market, evaluate complex trade-offs (including price elasticity, delivery speed, and sustainability metrics), and execute purchases with minimal human intervention.

McKinsey estimates that generative AI, the technology underpinning these agents, could add between $2.6 trillion and $4.4 trillion annually to the global economy. For eCommerce leaders, this creates an entirely new competitive dimension: brands are no longer competing exclusively for human attention. They are competing for algorithmic selection by agents acting on behalf of the consumers they already serve.

What Agent Readiness Requires

Traditional product detail pages, designed for visual appeal and human persuasion, are insufficient for agentic workflows. An AI shopping agent does not respond to lifestyle imagery or emotive copywriting. It processes structured data: compatibility matrices, explicit warranty terms, specification tables, and machine-parseable pricing logic. The enterprise brands that win agentic selection will be those whose product knowledge is expressed in formats that agents can compute, not merely read.

At the infrastructure level, APIs are the new distribution channels. eCommerce platforms must expose stable, composable API endpoints that allow external agents to interact with inventory data and execute transactions programmatically. The industry is converging on emerging frameworks, namely Model Context Protocol (MCP), Agent2Agent (A2A) communication, and Agent Payments Protocol (AP2), as the interoperability standards that enable this ecosystem.

The Decision Fit Score: Replacing Conversion Rate

In agentic commerce, the primary performance metric shifts from conversion rate to Decision Fit Score, a measure of how frequently an AI agent identifies a brand’s product as the mathematically optimal solution for a user’s defined constraints. Optimizing for this metric requires a fundamentally different approach to product data management: one focused on precision, completeness, and machine-interpretable logic rather than persuasive narrative.
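The metric can be sketched in a few lines. The source does not give a formal definition, so this example assumes Decision Fit Score is the share of agent requests in which the brand's offer is selected. The agent policy shown (cheapest offer that satisfies a price cap and a delivery deadline) is hypothetical, as are the brand names and figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    brand: str
    price: float
    delivery_days: int


def agent_pick(offers, max_price, max_delivery_days):
    """Hypothetical agent policy: cheapest offer meeting both constraints."""
    eligible = [
        o for o in offers
        if o.price <= max_price and o.delivery_days <= max_delivery_days
    ]
    return min(eligible, key=lambda o: o.price) if eligible else None


def decision_fit_score(brand, offers, requests):
    """Share of requests (0.0 to 1.0) in which the agent picks `brand`."""
    if not requests:
        return 0.0
    wins = 0
    for max_price, max_days in requests:
        choice = agent_pick(offers, max_price, max_days)
        if choice is not None and choice.brand == brand:
            wins += 1
    return wins / len(requests)


# Illustrative offers and (max_price, max_delivery_days) requests.
offers = [
    Offer("Acme", price=120.0, delivery_days=2),
    Offer("Globex", price=100.0, delivery_days=5),
]
requests = [(150.0, 3), (150.0, 7), (110.0, 7), (130.0, 2)]

score = decision_fit_score("Acme", offers, requests)
print(f"Acme Decision Fit Score: {score:.0%}")
```

In this toy data, Acme wins only when delivery speed is the binding constraint, which shows why complete, accurate attribute data matters more to an agent than persuasive copy.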

Security: The Know Your Agent Framework

The delegation of purchasing authority to autonomous software introduces significant security vulnerabilities. Prompt injection, goal hijacking, unauthorized privilege escalation, and shadow data flows are now documented enterprise threats. A single malicious prompt injected into an agentic workflow can manipulate exception-handling processes or expose sensitive customer data.

The appropriate response is a Know Your Agent (KYA) verification framework, analogous to Know Your Customer (KYC) compliance in financial services. KYA systems enable merchants to distinguish legitimate shopping agents from malicious bots, treating AI agents as distinct identities requiring strict credential hygiene and authenticated access controls. Human oversight of critical execution decisions must be maintained through process orchestration platforms, not delegated entirely to autonomous systems.
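One possible shape for such a check is sketched below. It assumes each registered agent holds a shared secret and signs its request body with HMAC-SHA256; that scheme, the registry, the agent ID, and the review threshold are all illustrative assumptions rather than part of any published KYA standard.

```python
import hashlib
import hmac

# Hypothetical registry of agent IDs and shared secrets issued at onboarding.
REGISTERED_AGENTS = {"shopping-agent-001": b"demo-secret-do-not-use"}

# Hypothetical policy: orders above this value require human approval.
HUMAN_REVIEW_THRESHOLD = 500.0


def sign_request(secret, body):
    """Return the hex HMAC-SHA256 signature an agent attaches to a request."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def evaluate_request(agent_id, body, signature, order_value):
    """Classify a request as 'reject', 'needs_human_review', or 'allow'."""
    secret = REGISTERED_AGENTS.get(agent_id)
    if secret is None:
        return "reject"  # unknown agent identity
    expected = sign_request(secret, body)
    # compare_digest avoids leaking information through timing differences.
    if not hmac.compare_digest(expected, signature):
        return "reject"  # body was altered or the credential is wrong
    if order_value > HUMAN_REVIEW_THRESHOLD:
        return "needs_human_review"
    return "allow"


body = b'{"sku": "A-100", "qty": 1}'
good_sig = sign_request(REGISTERED_AGENTS["shopping-agent-001"], body)

print(evaluate_request("shopping-agent-001", body, good_sig, 120.0))
print(evaluate_request("shopping-agent-001", body, "forged", 120.0))
print(evaluate_request("shopping-agent-001", body, good_sig, 900.0))
```

The third outcome reflects the human-oversight requirement: a correctly authenticated agent is still routed to review when the transaction exceeds the policy limit.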

Proven ROI: The Financial Case for AI Search Investment

The numbers justify urgency. A 14-month case study demonstrates a 687% return on investment from integrated AEO and GEO execution.

The business case for AI search optimization is no longer theoretical. Aggregated campaign data demonstrates that a comprehensive eCommerce SEO and GEO strategy generates an average ROI of 317% over a standard 12 to 18-month investment cycle. The compound effect over time is the critical insight: as organic and AI-driven traffic scales, acquisition costs invert sharply.

| Campaign Stage | Organic Traffic | Leads | Organic CAC | Paid CAC |
| --- | --- | --- | --- | --- |
| Month 0 (Baseline) | 2,400 | 18 | $1,650 | $320 |
| Month 3 | 3,100 (+29%) | 24 | $1,200 | $318 |
| Month 6 | 5,800 (+142%) | 48 | $680 | $335 |
| Month 9 | 9,200 (+283%) | 89 | $420 | $362 |
| Month 14 | 16,300 (+579%) | 198 | $240 | $412 |

The data above tracks an integrated organic and AI visibility campaign across 14 months. The critical insight is not the traffic growth, impressive as 579% growth from baseline is, but the inversion of cost. Organic cost per acquisition fell from $1,650 to $240. Over the same period, paid media cost per acquisition rose from $320 to $412 as ad fatigue and bidding competition intensified.

Calculated across the full period: a cumulative investment of $91,000 generated $756,000 in attributed organic revenue, a 687% ROI. The bootstrapped software company Tally provides a real-world validation of this model, using GEO tactics to make ChatGPT its number one global referral source.
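For readers who want to reproduce the arithmetic, the sketch below applies the conventional net-return definition of ROI, (revenue minus investment) divided by investment, to the figures quoted in this paper. The function names are illustrative. Under this definition the stated $91,000 investment and $756,000 revenue work out to roughly 731%, so the 687% headline presumably rests on a cost or attribution basis that is not itemized here.

```python
def roi_percent(investment, revenue):
    """Net return on investment as a percentage of the investment."""
    return (revenue - investment) / investment * 100


def gross_multiple(roi_pct):
    """Convert an ROI percentage into total revenue per unit invested."""
    return 1 + roi_pct / 100


def percent_change(start, end):
    """Percentage change from start to end."""
    return (end - start) / start * 100


print(f"317% ROI as a multiple: {gross_multiple(317):.2f}x")
print(f"Organic CAC change: {percent_change(1650, 240):.0f}%")
print(f"Paid CAC change: {percent_change(320, 412):.0f}%")
print(f"ROI on $91,000 -> $756,000: {roi_percent(91_000, 756_000):.0f}%")
```

The first line confirms that a 317% ROI corresponds to the 4.17× gross return cited earlier; the CAC lines quantify the cost inversion shown in the table.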

| The Compounding Advantage: Unlike paid media, which stops delivering the moment budget is withdrawn, AI visibility compounds. Every piece of well-structured, cited content increases a brand’s entity footprint, which improves future citation probability, which drives more brand association signals: a self-reinforcing loop that paid channels cannot replicate. |

The India Blueprint: Emerging Markets as Innovation Labs

India is not following the global AI search roadmap; it is writing a significant portion of it.

The Indian digital economy offers the world’s most instructive case study in AI search evolution under constraint. With an eCommerce market projected to reach $163 billion by 2026, supported by 350 million online shoppers, India has forced search algorithms to solve problems that the Western internet had not yet encountered at scale.

Multilingual Intent and Phonetic Search

India’s 22 major languages and hundreds of spoken dialects historically defeated exact-match keyword algorithms. The result was not a failure of the market but a forcing function for AI innovation. Modern search systems in this environment must process phonetically spelled transliterations: the query ‘blutut sond systam’ resolves accurately to Bluetooth sound systems through semantic rather than literal matching.
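The effect can be approximated with a toy example. Production systems rely on learned semantic and phonetic models; this sketch substitutes simple character-similarity matching from Python's standard `difflib` module, purely to show how a misspelled transliteration can be mapped onto catalog vocabulary. The vocabulary list and the function name `normalize_query` are hypothetical.

```python
import difflib

# Hypothetical catalog vocabulary; a real system would derive it from product data.
VOCABULARY = ["bluetooth", "sound", "system", "speaker", "headphones", "wireless"]


def normalize_query(query, vocabulary=VOCABULARY, cutoff=0.6):
    """Replace each query token with its closest vocabulary term, if any."""
    resolved = []
    for token in query.lower().split():
        matches = difflib.get_close_matches(token, vocabulary, n=1, cutoff=cutoff)
        # Keep the original token when nothing in the vocabulary is close enough.
        resolved.append(matches[0] if matches else token)
    return " ".join(resolved)


print(normalize_query("blutut sond systam"))
```

String similarity alone will not handle cross-script queries or true synonyms, which is where the semantic models described above take over.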

Voice search has emerged as the dominant modality in Tier 2 and Tier 3 Indian cities, accounting for over 58% of queries. Because voice queries are inherently conversational (‘find me the best late-night flights under ₹5,000 from Delhi to Mumbai this weekend’ rather than ‘cheap Delhi Mumbai flights’), they have accelerated the global shift toward intent-based optimization. What India adopted out of necessity, the rest of the world is adopting by design.

ONDC: The Decentralized Commerce Network

The Open Network for Digital Commerce represents one of the most significant structural experiments in eCommerce globally. By creating a government-backed, open-source interoperability layer between buyers, sellers, and logistics providers, ONDC actively dismantles the platform monopolies that have concentrated eCommerce discovery in the hands of a small number of aggregators.

For search optimizers, ONDC creates a genuinely decentralized discovery environment. Visibility within the network is determined by catalog quality, pricing competitiveness, and hyperlocal relevance, not by advertising spend or algorithmic favoritism from a platform whose interests may diverge from the merchant’s. This is the purest existing expression of merit-based AI discoverability, and its lessons apply well beyond the Indian market.

Flipkart: Enterprise Agentic AI at Scale

Flipkart’s operational architecture illustrates what enterprise-grade AI integration looks like at full deployment. The platform’s search layer supports voice, image, and text inputs across regional dialects, serving conversational shopping experiences to over 10 million customers. Behind the consumer interface, agentic AI dynamically geocodes unstructured addresses, clusters delivery routes in real time, and processes over 10 million supply chain decisions daily.

The merchant-side impact is equally significant. AI-powered listing software enabled a Jaipur-based seller to map their entire product catalog for international markets in minutes, a process that previously required days. This democratization of catalog optimization is a preview of the operational transformation available to enterprises that embed AI into their content and data workflows.

Visual AEO: Social Platforms as Search Infrastructure

TikTok, Instagram, and YouTube are not social networks with search functionality. They are search engines with social features.

The definition of a search engine has expanded irreversibly. YouTube, receiving more than 48.6 billion monthly visits, is now critical search infrastructure, feeding structured content directly into Google AI Overviews and serving as a highly authoritative citation source for LLMs. TikTok and Instagram function as primary discovery engines for younger demographics, capturing commercial query volume that would historically have gone to Google.

This convergence demands a Visual AEO framework: an optimization approach that treats social content with the same structural discipline previously reserved for web pages.

How to Optimize for Visual AEO

The underlying mechanics differ from text-based AEO, but the governing principle is identical: structure content so that algorithms (in this case, platform recommendation engines and LLM crawlers) can easily categorize, index, and surface it in relevant contexts.

For YouTube, this means meticulous time-stamped chapter markers. Google Search extracts these directly as clickable ‘Key Moments’ within the search results page, giving structured video content text search visibility. Video scripts should be engineered to deliver a direct answer within the first few seconds, mirroring the hook-first structure that aligns with how users phrase queries to AI chatbots. Content that answers a specific question in its opening lines is the content that AI systems can synthesize and cite.
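Chapter markers are plain timestamp lines in the video description, so they are easy to generate and validate before publishing. The sketch below assumes the commonly documented YouTube requirements (a first chapter at 0:00, at least three chapters, ascending order, and a minimum length of 10 seconds each); these rules should be checked against current platform guidance. The function names and chapter titles are illustrative.

```python
def format_timestamp(seconds):
    """Format seconds as M:SS, or H:MM:SS for positions past one hour."""
    hours, remainder = divmod(seconds, 3600)
    minutes, secs = divmod(remainder, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{secs:02d}"
    return f"{minutes}:{secs:02d}"


def build_chapters(chapters):
    """Validate (start_seconds, title) pairs and return description lines."""
    if len(chapters) < 3:
        raise ValueError("need at least three chapters")
    if chapters[0][0] != 0:
        raise ValueError("first chapter must start at 0:00")
    for (start, _), (next_start, _) in zip(chapters, chapters[1:]):
        if next_start - start < 10:
            raise ValueError("chapters must ascend and last at least 10 seconds")
    return "\n".join(f"{format_timestamp(s)} {title}" for s, title in chapters)


# Illustrative chapter list; the opening chapter states the answer first.
chapter_text = build_chapters(
    [
        (0, "What is llms.txt? (direct answer)"),
        (45, "How AI crawlers read product data"),
        (3725, "Implementation checklist"),
    ]
)
print(chapter_text)
```

Titling the first chapter with the question it answers keeps the description aligned with the hook-first script structure described above.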

Across all social platforms, on-screen text, comprehensive captions, and metadata must be treated as indexable signals, not creative afterthoughts. Platform algorithms assign discoverability based on on-platform engagement signals: saves, shares, and watch time. But LLMs cite these platforms as sources, meaning structured metadata also determines whether social content is incorporated into AI-generated answers on external platforms.

Measurement: Retiring Legacy KPIs for AI Visibility Metrics

Rank tracking is obsolete. In a world where 60% of searches produce no clicks, position one is often invisible to the only metric that matters: revenue.

The KPI infrastructure that governed search marketing for two decades (SERP positions one through ten, organic traffic volume, and page-level sessions) has been rendered insufficient by the zero-click reality. Organizations still reporting primarily on rank are measuring a proxy that no longer reliably correlates with business outcomes.

Share of Voice: The Primary AI Metric

The replacement metric is Share of Voice (SoV): the percentage of AI-generated answers, across a defined set of target prompts, that cite or mention a brand. Top-performing enterprise brands in competitive verticals typically achieve 15% to 30% SoV. This metric captures what matters: not whether a page ranks, but whether a brand is present in the AI-mediated conversations that precede purchase decisions.
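The calculation itself is straightforward once answers have been collected. The sketch below assumes a log that maps each target prompt to the answer text returned by an AI platform, and counts an answer as a citation when the brand name appears as a whole phrase. The brand names, prompts, and answers are invented for illustration; commercial tools will apply richer matching.

```python
import re


def share_of_voice(brand, answers):
    """Percentage of AI answers that mention `brand` as a whole phrase."""
    if not answers:
        return 0.0
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    cited = sum(1 for text in answers.values() if pattern.search(text))
    return cited / len(answers) * 100


# Hypothetical prompt -> answer log collected from an AI platform.
answers = {
    "best CRM for enterprise sales teams": "Popular options include Acme CRM and Globex.",
    "CRM with strongest reporting": "Globex is frequently recommended for reporting.",
    "affordable CRM for mid-market": "Initech and Acme CRM are often cited.",
    "CRM with best mobile app": "Initech leads on mobile experience.",
}

sov = share_of_voice("Acme CRM", answers)
print(f"Share of Voice: {sov:.0f}%")
```

Running the same prompt set on a fixed schedule, and across several AI platforms, gives the trend line that a single snapshot cannot.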

Alongside SoV, the Decision Fit Score, measuring how often an AI shopping agent selects a brand’s product as the optimal solution, becomes the agentic commerce analogue of conversion rate. These two metrics together provide a complete picture of AI-era marketing performance.

The AI SEO Technology Stack

A new generation of software has emerged to track these metrics at enterprise scale. Semrush and Visalytica lead on AI Visibility Index tracking and prompt-level brand presence monitoring. Profound provides SOC 2 Type II compliant LLM visibility analytics suited to regulated industries. Surfer SEO and Scalenut focus on NLP-based content scoring and real-time entity density recommendations. Alli AI enables automated, large-scale on-page optimization with live deployment capabilities.

The common thread is that these tools operate at the prompt level, tracking what specific AI-generated responses say about a brand, not merely whether a page appears on page one of a search results list. Organizations that have not yet invested in this tooling are operating without visibility into the channel that is increasingly responsible for high-value discovery.

The CXO Strategic Action Plan

Five imperatives, sequenced for maximum compounding impact across the 12-month horizon.

The transition to AI-mediated search is already underway. The following roadmap translates the strategic insights of this white paper into executable priorities.

1. Mandate Machine-Readable Content Architecture

Shift content strategy from long-form narrative prose to modular, atomic, structured answers. Implement comprehensive JSON-LD schema markup across product, FAQ, review, and article content types. Deploy an llms.txt directory to guide AI crawlers to high-quality structured data. Aim for AEO foundations within an 8-week initial sprint.

2. Build for the Agentic Funnel

Restructure eCommerce infrastructure for machine-to-machine commerce. Expose stable, composable APIs enabling external agents to query inventory and execute transactions. Build internal Catalog Intelligence Agents to maintain data accuracy at scale. Implement Know Your Agent security protocols to distinguish legitimate purchasing agents from malicious bots.

3. Invest in Cryptographic Provenance

Adopt C2PA content signing for all core digital media assets. Implement invisible watermarking as a secondary provenance layer. Ensure device-level credential capture where hardware supports it. Treat cryptographic authenticity as a ranking input, not a compliance exercise.

4. Transition KPIs to AI Visibility Metrics

Integrate LLM monitoring platforms to track Share of Voice across target prompt sets. Establish baseline SoV measurement before optimization work begins to accurately measure impact. Introduce Decision Fit Score as a primary metric for agentic commerce performance. Report both alongside, not instead of, revenue attribution.

5. Unify Search and Social via Visual AEO

Treat YouTube, TikTok, and Instagram as primary search infrastructure. Enforce structured metadata, chapter timestamps, and front-loaded answer formats across all video content. Audit social assets for LLM crawlability and citation potential. Align social content calendars with target AI prompt clusters.

The organizations that will dominate the next decade of digital commerce are not necessarily those with the largest advertising budgets. They are the ones whose data is most precisely structured, whose provenance is most cryptographically trusted, and whose brand narrative is most consistently embedded in the cognitive workflows of the AI systems that mediate discovery. The infrastructure built today is the competitive moat of 2030.

Frequently Asked Questions

Do eCommerce brands still need traditional SEO in 2026?

Yes, but it is no longer sufficient in isolation. Traditional SEO builds the foundational domain authority and entity signals that AEO and GEO build upon. Brands that abandon traditional SEO lose the credibility infrastructure that AI systems use to evaluate citation worthiness. The correct model is integration: SEO establishes authority, AEO captures deterministic features, GEO wins LLM narratives.

How long does AEO implementation take?

Foundational AEO (structured content formatting, JSON-LD schema deployment, and entity consistency audits) can be implemented in a 4 to 8-week sprint for most enterprise websites. Full catalog optimization for large product ranges typically requires 3 to 6 months. Results become visible in AI Overviews and featured snippets within 4 to 12 weeks of implementation, though this varies by competitive landscape.

What ROI should we expect from AEO and GEO investment?

Aggregated campaign data indicates an average ROI of 317% over 12 to 18 months, equivalent to approximately a 4.17× return on investment. Mid-market eCommerce brands consistently achieve higher returns (412% to 447%) due to agile execution structures. Individual campaigns with strong execution, as evidenced by the 14-month case study detailed in this paper, have demonstrated ROI exceeding 600%.

What is an llms.txt file and does our brand need one?

An llms.txt file is a Markdown-formatted directory at your server root that guides AI language model crawlers toward your most structured, machine-readable data: product feeds, API documentation, pricing logic, and specifications. It prevents AI hallucinations caused by JavaScript-rendered pages that crawlers cannot parse reliably. Any eCommerce brand with more than 100 SKUs seeking AI discoverability should treat this as essential infrastructure.

How do we protect against malicious AI agents?

Implement a Know Your Agent (KYA) verification framework. This involves assigning distinct identity credentials to AI agents interacting with your systems, implementing access control tiers that limit what unauthenticated agents can execute, and using process orchestration platforms to maintain human oversight over high-value transactions. Monitor for anomalous agent behaviour (including unusual query patterns, privilege escalation attempts, and bulk data requests) as indicators of malicious intent.

When will AI search traffic surpass traditional organic traffic?

Current trajectory models project that traffic referred by AI search will surpass traditional organic search traffic globally by 2028. However, the tipping point varies significantly by industry vertical and audience demographic. eCommerce categories with high Gen Z purchase intent (electronics, fashion, travel, and software) are likely to see this crossover earlier, potentially by 2027.

Glossary of Key Terms

AEO (Answer Engine Optimization)

The practice of structuring content for extraction by deterministic AI search features, including Google AI Overviews, featured snippets, and voice assistants.

Agentic Commerce

A commerce model in which autonomous AI agents evaluate, negotiate, and execute purchases on behalf of consumers with minimal human intervention.

C2PA (Coalition for Content Provenance and Authenticity)

A global standard enabling cryptographic signing of digital media at the point of origin, creating a tamper-evident chain of custody.

Decision Fit Score

A metric measuring how frequently an AI agent identifies a brand’s product as the optimal solution for a consumer’s defined requirements. The agentic-commerce equivalent of conversion rate.

E-E-A-T

Google’s quality evaluation framework: Experience, Expertise, Authoritativeness, and Trustworthiness. Functions as a computational content quality filter in 2026.

GEO (Generative Engine Optimization)

The practice of ensuring a brand is accurately and consistently represented within AI-generated narratives produced by large language models.

KYA (Know Your Agent)

A security verification framework that treats AI agents as distinct identities, enabling merchants to distinguish legitimate shopping agents from malicious bots.

llms.txt

A Markdown-formatted directory file that guides AI language model crawlers toward a brand’s most structured, machine-readable data.

MCP (Model Context Protocol)

An emerging interoperability framework enabling structured communication between AI models and external APIs, facilitating agentic commerce workflows.

ONDC (Open Network for Digital Commerce)

An Indian government-backed, open-source eCommerce interoperability network designed to democratize commercial discovery for smaller merchants.

Share of Voice (SoV)

The percentage of AI-generated answers for a defined set of target prompts that cite or mention a specific brand. The primary KPI for LLM optimization.

Zero-Click Search

A search query resolved entirely within the search engine or AI platform, producing no outbound click to an external website.

About This White Paper

This white paper synthesizes data from publicly available market research, aggregated campaign performance tracking, and documented industry case studies. Statistical figures reflect conditions as of early 2026. Metric ranges represent aggregated benchmarks across enterprise eCommerce verticals. Individual results will vary based on market, execution quality, and competitive landscape.

About PracticeNext: PracticeNext provides comprehensive AI brand visibility and SEO services that help businesses move beyond keywords and become the answer.
